An AI researcher who has been warning about the technology for over 20 years say...

source link: https://finance.yahoo.com/news/ai-researcher-warning-technology-over-114317785.html

An AI researcher who has been warning about the technology for over 20 years says we should 'shut it all down,' and issue an 'indefinite and worldwide' ban

One AI researcher who has been warning about the tech for over 20 years said we should "shut it all down."

Eliezer Yudkowsky said the open letter calling for a pause on AI development doesn't go far enough.

Yudkowsky, who has been described as an "AI doomer," suggested an "indefinite and worldwide" ban.

An AI researcher who has warned about the dangers of the technology since the early 2000s said we should "shut it all down," in an alarming op-ed published by Time on Wednesday.

Eliezer Yudkowsky, a researcher and author who has been working on Artificial General Intelligence since 2001, wrote the article in response to an open letter from many big names in the tech world, which called for a moratorium on AI development for six months.

The letter, signed by 1,125 people including Elon Musk and Apple's co-founder Steve Wozniak, requested a pause on training AI tech more powerful than OpenAI's recently launched GPT-4.

Yudkowsky's article, titled "Pausing AI Developments Isn't Enough. We Need to Shut it All Down," said he refrained from signing the letter because it understated the "seriousness of the situation," and asked for "too little to solve it."

He wrote: "Many researchers steeped in these issues, including myself, expect that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die."

He explained that AI "does not care for us nor for sentient life in general," and that we are currently far from being able to instill those kinds of principles in the technology.

Yudkowsky instead suggested a ban that is "indefinite and worldwide" with no exceptions for governments or militaries.

"If intelligence says that a country outside the agreement is building a GPU cluster, be less scared of a shooting conflict between nations than of the moratorium being violated; be willing to destroy a rogue data center by airstrike," Yudkowsky said.

Yudkowsky has for many years been issuing bombastic warnings about the possibly catastrophic consequences of AI. Earlier in March he was described by Bloomberg as an "AI Doomer," with author Ellen Huet noting that he has been warning about the possibility of an "AI apocalypse" for a long time.

Fortune

FortuneGoogle CEO won’t commit to pausing A.I. development after experts warn about ‘profound risks to society’

Sundar Pichai said A.I. could lead to “disinformation at scale” and humanity's destruction, but poked holes in a proposal to slow its development.

22h ago Fortune

FortuneThe $300,000 starter home is going extinct: ‘A renter society not because of choice but because of force’

Housing economist Ali Wolf is worried by a growing "imbalance between the haves and have-nots in the economy."

1d ago TipRanks

TipRanksSeeking at Least 8% Dividend Yield? Goldman Sachs Suggests 2 Dividend Stocks to Buy

One thing is certain in the stock markets lately: their inherent uncertainty is taking charge, and high volatility is here to stay. For months, investors and economists have worried about the recessionary effects of the Federal Reserve’s anti-inflationary interest rate hikes – but the recent bank crisis has added another layer of concern to an already tumultuous situation. Now, we’re dealing with the fallout from that crisis, and inflation and interest rates both remain high. It’s a textbook cas

1d ago Bloomberg

BloombergEl-Erian: Warning Signs Are Now Flashing Yellow

Mohamed El-Erian, chief economic adviser at Allianz and Bloomberg Opinion columnist, says the worst of the recent turmoil in banking is over, but there are still warning signs. "We're going from liquidity to capital, and from financial contagion to economic contagion," El-Erian said in an interview on Bloomberg Television in Cernobbio, Italy. El-Erian's opinions are his own. Follow Bloomberg for business news & analysis, up-to-the-minute market data, features, profiles and more: http://www.bloomberg.com Connect with us on... Twitter: https://twitter.com/business Facebook: https://www.facebook.com/bloombergbusiness/ Instagram: https://www.instagram.com/quicktake/?hl=en

2d ago Bloomberg

BloombergUS Power Plant Firm Goes Bankrupt After Winter Storm Penalties

(Bloomberg) -- Lincoln Power LLC, the owner of two Illinois power plants, filed for bankruptcy after its financial strain was exacerbated by nearly $39 million in penalties levied by the biggest US electric-grid operator. Most Read from BloombergVeteran Money Managers Bail on Stock Rally With Fed Hawks FlyingParents Are Paying Consultants $750,000 to Get Kids Into Ivy League SchoolsGlobal Food Supply Risks Rise as Key Traders Leave RussiaTrump Weighs Asking to Move NY Criminal Case to Staten Isl

22h ago SmartAsset

SmartAssetProcrastinators, Rejoice: The 6.89% I bonds Will Beat the Old 9.62% Bonds in Just 4 Years

With a yield of 9.62%, the recently expired Series I bond was understandably popular. With interest rates rising, bond funds are down this year and banks continue to offer miserly rates on deposit accounts. So it's no wonder that a … Continue reading → The post It Pays to Procrastinate: The New 6.89% I bonds Will Beat the Old 9.62% Bonds in Just 4 Years appeared first on SmartAsset Blog.

1d ago Fortune

FortuneHow much you need to earn to afford a $500,000 home

The average U.S. home sales price hit $535,800 in 2022. We asked experts how much you need to earn to afford a home around that price point.

1d ago Investor's Business Daily

Investor's Business DailyThree Stocks Turn $10,000 To $25,265 In Just 3 Months

Investors enjoyed a small S&P 500 rally in March. But there were much more lucrative places to put money in the month.

1d ago Fortune

Fortune‘Dr. Doom’ Nouriel Roubini warns economic ‘trilemma’ is making a financial crash inevitable

Troubled regional lenders will starve indebted businesses and households of credit, trigger a hard landing, and turn a liquidity crisis into a balance sheet crisis, warns the noted economist.

1d ago SmartAsset

SmartAssetBaby Boomers, You Need This Much Money to Retire Comfortably

The Baby Boomer generation is reaching retirement age in record numbers. With more Boomers retiring on a daily basis, it helps to understand how prepared they are to leave their jobs for good. In this article, we’ll discuss the average … Continue reading → The post Average Retirement Savings for Baby Boomers appeared first on SmartAsset Blog.

1d ago Bloomberg

BloombergSoaring Auto Loan Rates Are the Latest Roadblock for Car Sales

(Bloomberg) -- Just when it seemed like things were getting back to normal at Rhett Ricart’s Columbus, Ohio, car dealerships — after pandemic-induced inventory shortages and runaway price inflation — a new obstacle emerged to keep buyers from closing the deal: soaring interest rates on auto loans.Most Read from BloombergVeteran Money Managers Bail on Stock Rally With Fed Hawks FlyingParents Are Paying Consultants $750,000 to Get Kids Into Ivy League SchoolsGlobal Food Supply Risks Rise as Key Tr

7h ago Investor's Business Daily

Investor's Business DailyDow Jones Rises 150 Points After Cool Inflation Data; 6 Stocks To Buy And Watch

The Dow Jones rose Friday after key inflation data, with the release of the PCE price index, the Fed's preferred measure of inflation.

1d ago Zacks

ZacksThe Charles Schwab Corporation (SCHW) Stock Sinks As Market Gains: What You Should Know

The Charles Schwab Corporation (SCHW) closed the most recent trading day at $52.38, moving -0.17% from the previous trading session.

22h ago Investor's Business Daily

Investor's Business DailyCheap Stocks To Buy: Should You Watch These 5 Growth Stocks?

Regardless of what stage of the market cycle we're in, some folks never tire of searching for cheap stocks to buy. If it has thin trading volume, the fund manager will have an awfully tough time accumulating shares — without making a big impact on the stock price. IBD research also finds that dozens, if not hundreds, of great stocks each year do not start out as penny shares.

20h ago Fortune

FortuneVirgin Orbit’s sudden collapse is the latest in a series of high-risk failures by Richard Branson

The satellite deployment startup founded by Branson is letting go of the bulk of its staff, citing an “inability to secure meaningful funding.”

1d ago Investor's Business Daily

Investor's Business DailyMarket Rally Builds Momentum; Tesla Breaks Out With Deliveries Due

The market rally is building momentum with more stocks flashing buy signals. Tesla deliveries are due as EV rivals report Q1 sales.

3h ago Barrons.com

Barrons.comThe Newest ‘Bubble’ Is in Money-Market Funds

People are rushing into money-market funds. Total assets held in money-market funds, which are investment vehicles that buy cash-like securities such as short-term Treasury bills, recently reached close to $5.5 trillion, according to RBC. Earlier this year, money-market fund assets stood at roughly $4.5 trillion, a level at which people stopped pouring more money into those funds a few times in the past few years, opting instead to buy other assets like stocks.

1d ago The Wall Street Journal

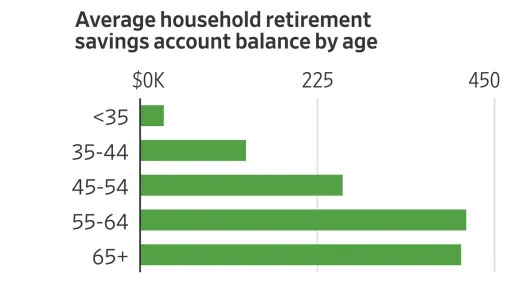

The Wall Street JournalHere’s What Retirement Looks Like in America in Six Charts

Americans spend decades saving for retirement, never quite sure how much is enough or what sort of life that money will ultimately buy. To benchmark your retirement plans—including your savings and spending and how you spend your time—one place to start is by looking at how your numbers stack up against Americans overall. The biggest source of retirement income for many Americans is the nest egg built up during their careers.

1d ago Barrons.com

Barrons.comNIO, Li Auto, XPeng March Deliveries Gave Investors What They Want.

March deliveries from the three Chinese EV makers fell within the companies' guided ranges. Combined deliveries were up month over month and year over year.

6h ago Barrons.com

Barrons.comActivision Stock Is Too Cheap: Analysts. They Expect Sale to Microsoft to Close.

Wall Street "currently undervalues" the likelihood that regulators will allow the merger to be completed, according to New Street Research.

1d ago Bloomberg

BloombergVeteran Money Managers Bail on Stock Rally With Fed Hawks Flying

(Bloomberg) -- Optimism about imminent rate cuts is stirring animal spirits — and unease — in equal measure at the end of a turbulent quarter in markets. Most Read from BloombergVeteran Money Managers Bail on Stock Rally With Fed Hawks FlyingParents Are Paying Consultants $750,000 to Get Kids Into Ivy League SchoolsGlobal Food Supply Risks Rise as Key Traders Leave RussiaTrump Weighs Asking to Move NY Criminal Case to Staten IslandTrump Faces Fingerprints, Mug Shot After Dramatic IndictmentPromi

9h ago Benzinga

BenzingaJPMorgan's Bob Michele Issues Dire Warning On Rally Of Risk Assets, Says Recession Is Inevitable

Bob Michele, the chief investment officer of JPMorgan Asset Management, has warned of an economic downturn, saying that markets are headed for a rally before an inevitable slowdown. In an interview with Bloomberg on Friday, Michele says risk assets will rise in the next quarter as they did during the Great Recession. "In the next quarter, we could see risk assets rally. You could have a feel-good period, and then the reality catches up," Michele said. "If we've been taught anything this month, y

4h ago Yahoo Finance

Yahoo FinanceEV chargers 'on trajectory' to be as common as gas stations, EVgo CEO says

Soaring shares in charging company EVgo offer some respite for investors who suffered through a rough 2022. EVgo is still unprofitable. Investors are looking for any sign that EVgo, and charging networks in general, are poised to explode in growth.

1d ago Investor's Business Daily

Investor's Business DailyAs Nvidia Stock Firms Up, Option Trade Could Return Nearly $300

With positive flow in both stocks in the technology sector and options making more bullish bets, it's the ideal time to take a look at Nvidia.

1d ago Investor's Business Daily

Investor's Business DailyThese Five IBD 50 Chip Stocks Hold Surprisingly Low P/E Ratios; All Are Setting Up Buy Points

These five semiconductor growth stocks have low P/E ratios and sound fundamentals and have gained between 19% and 50% this year so far.

1d ago Fortune

Fortune‘Everyone is kind of tired and has given up’ on COVID. But this new variant is ‘one to watch,’ the WHO says

Here’s what we know so far about the latest variant to raise eyebrows—and the first to do so in several months.

23h ago Fortune

FortuneTrump NFT sales skyrocket more than 400% on news of his indictment

He became the first former U.S. president to be indicted for a crime on Thursday.

23h ago Yahoo Finance

Yahoo FinancePutin’s getting nervous about Russia’s sinking economy

Russia's arrest of a journalist who detailed its economic woes is an act of desperation—and probably not the last one.

2d ago Investor's Business Daily

Investor's Business DailyTop 5 China Stocks To Buy And Watch

A growing number of China stocks are setting up or flashing buy signals, as the Chinese economy gains momentum.

7h ago Investor's Business Daily

Investor's Business DailyChina EV Sales: Li Auto, Nio, XPeng Report Deliveries With Tesla Rival BYD Due

After a rough start to 2023, China EV sales are generally rebounding. Li Auto, Nio and XPeng reported March sales. Tesla rival BYD is on tap.

7h ago Bankrate

Bankrate6 common reasons your investments may trigger an IRS audit

And what investors can do if they’re contacted by the IRS.

1d ago Zacks

ZacksTime to Buy Cal-Maine Foods (CALM) Stock After Strong Earnings?

Let's see if investors should buy into the rally with Cal-Maine stock up 9% following its stellar fiscal third-quarter results on Tuesday.

2d ago Barrons.com

Barrons.comWeek’s Best: Fisher Investments Heads to Texas

Fisher Investments is relocating its headquarters to Texas from Washington state after the path was cleared for a capital-gains tax on individuals in Washington. The wealth and investment management firm already has an office in Plano, Texas, a suburb of Dallas. Fisher announced its decision the same day that Washington’s State Supreme Court ruled that a capital-gains tax approved by lawmakers in 2021 was constitutional.

23h ago Zacks

ZacksDon't Ignore the Strength of These 3 Large-Caps

Large-cap technology has thrust itself back into style in 2023, with buyers stepping up at every turn. Can the rally sustain itself?

1d ago Investor's Business Daily

Investor's Business DailyHow To Invest: Nvidia, Tesla Reveal 3-Step Routine For Bull And Bear Markets

Successful stock investing starts with having rules and a routine. Learn how to invest in stocks following a simple three-step routine.

1d ago 16h ago

16h ago Fortune

Fortune‘Car Guy’ Bill Gates just rode in an autonomous vehicle across London and says the sector is reaching a ‘tipping point’ in the next decade

The billionaire said autonomous vehicles can save time, money, and reduce inequities in transport access—but it'll be decades before that happens.

22h ago Zacks

ZacksInternational Business Machines Corporation (IBM) is Attracting Investor Attention: Here is What You Should Know

Recently, Zacks.com users have been paying close attention to IBM (IBM). This makes it worthwhile to examine what the stock has in store.

1d ago Benzinga

BenzingaNASDAQ-Traded REIT Loses 89% Of Value In 17 Months

Industrial Logistics Properties Trust (NASDAQ: ILPT) traded at $27 in October 2021 and now goes for $2.97 — one of the steepest declines among real estate investment trusts (REITs). The company is reeling from the effects of interest-rate hikes and a general drop in the value of real estate. With a market capitalization of $181 million, it’s not a major REIT, but that big of a loss in value gets noticed. Funds from operations (FFO) over the most recent 12 months was negative 289% and for the pas

1d ago Barrons.com

Barrons.comTesla Deliveries Are Coming. The Stakes Are High After the Stock Had a Monster Quarter.

Wall Street expects first-quarter deliveries of about 420,000 units. Investors should pay attention to that and the total production figure.

22h ago
