#SummerChallenge# Flannel HOST-GW Cross-Node Communication - Open Source Foundation Software Community - 51CTO.COM

source link: https://ost.51cto.com/posts/14355



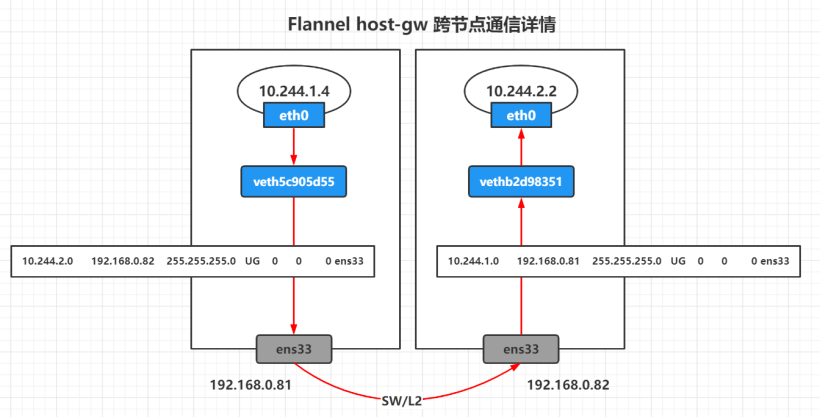

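host-gw creates IP routes to each remote pod subnet, using the remote machine's IP address as the next hop. It requires direct Layer 2 connectivity between the hosts running flannel.

Because the hosts share a Layer 2 network, host-gw can rely on the Linux kernel's packet forwarding (FORWARD). Packets are neither encapsulated nor NATed. This gives good performance, few dependencies, and a simple setup.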
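The following short Python sketch reproduces the host-gw routes that node1 ends up with. For each other node, it adds one route whose gateway is that node's IP. The `LEASES` map and the `host_gw_routes` helper are hypothetical. flannel computes and installs these routes itself from its subnet leases. The node IPs and subnets are read off the `route -n` outputs later in this article.

```python
import ipaddress

# Hypothetical snapshot of node IP -> pod subnet, taken from the route tables in this article.
LEASES = {
    "192.168.0.80": "10.244.0.0/24",  # master
    "192.168.0.81": "10.244.1.0/24",  # node1
    "192.168.0.82": "10.244.2.0/24",  # node2
}


def host_gw_routes(local_ip: str, leases: dict[str, str], dev: str = "ens33") -> list[str]:
    """Return one `ip route replace` command per remote node subnet.

    The remote node's own IP is the gateway; no tunnel device is involved.
    """
    routes = []
    for node_ip, subnet in sorted(leases.items()):
        if node_ip == local_ip:
            continue  # the local subnet is reached directly through cni0 instead
        net = ipaddress.ip_network(subnet)
        routes.append(f"ip route replace {net} via {node_ip} dev {dev}")
    return routes


for cmd in host_gw_routes("192.168.0.81", LEASES):
    print(cmd)
```

For node1 this yields routes for 10.244.0.0/24 via 192.168.0.80 and 10.244.2.0/24 via 192.168.0.82, matching its `route -n` output.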

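To deploy host-gw mode, change "Type": "vxlan" to "Type": "host-gw" in the Backend section of net-conf.json in the flannel manifest. No other change is needed: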

wget https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml

sed -i "s/vxlan/host-gw/g" kube-flannel.yml
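The `sed` command rewrites every occurrence of `vxlan` in the manifest. You may prefer to touch only the backend type. In that case, a minimal Python sketch like the following edits the net-conf.json document directly.

It assumes the default net-conf.json content shown in the code (Network 10.244.0.0/16, Backend Type vxlan). In the real manifest, this JSON is embedded as a string in a ConfigMap, so you would still have to extract it and write it back. `switch_backend` is a hypothetical helper.

```python
import json

# Assumed default net-conf.json content from kube-flannel.yml.
NET_CONF = """
{
  "Network": "10.244.0.0/16",
  "Backend": {
    "Type": "vxlan"
  }
}
"""


def switch_backend(net_conf: str, backend: str = "host-gw") -> str:
    """Return net-conf.json with only Backend.Type changed; other keys are kept as-is."""
    conf = json.loads(net_conf)
    conf.setdefault("Backend", {})["Type"] = backend
    return json.dumps(conf, indent=2)


print(switch_backend(NET_CONF))
```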

Cross-node communication

[root@master ~]# kubectl create deployment cni-test --image=burlyluo/nettoolbox --replicas=2

[root@master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

cni-test-777bbd57c8-7jxzs 1/1 Running 0 2m36s 10.244.1.4 node1.whale.com <none> <none>

cni-test-777bbd57c8-xb2pp 1/1 Running 0 2m36s 10.244.2.2 node2.whale.com <none> <none>

pod1 and its veth interface

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-7jxzs -- ethtool -S eth0

NIC statistics:

peer_ifindex: 7

rx_queue_0_xdp_packets: 0

rx_queue_0_xdp_bytes: 0

rx_queue_0_xdp_drops: 0

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-7jxzs -- ifconfig eth0

eth0 Link encap:Ethernet HWaddr 7A:0B:D4:4C:C5:68

inet addr:10.244.1.4 Bcast:10.244.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:6 errors:0 dropped:0 overruns:0 frame:0

TX packets:1 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:488 (488.0 B) TX bytes:42 (42.0 B)

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-7jxzs -- route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.244.1.1 0.0.0.0 UG 0 0 0 eth0

10.244.0.0 10.244.1.1 255.255.0.0 UG 0 0 0 eth0

10.244.1.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

[root@node1 ~]# ip link show | grep ^7

7: veth5c905d55@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master cni0 state UP mode DEFAULT group default

[root@node1 ~]# ip link show veth5c905d55

7: veth5c905d55@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master cni0 state UP mode DEFAULT group default

link/ether 9e:1e:ab:74:dc:ac brd ff:ff:ff:ff:ff:ff link-netnsid 2

[root@node1 ~]# ifconfig cni0

cni0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.244.1.1 netmask 255.255.255.0 broadcast 10.244.1.255

inet6 fe80::5cc1:b9ff:fea7:b856 prefixlen 64 scopeid 0x20<link>

ether 5e:c1:b9:a7:b8:56 txqueuelen 1000 (Ethernet)

RX packets 13811 bytes 1120995 (1.0 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 13789 bytes 1285224 (1.2 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@node1 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.1 0.0.0.0 UG 100 0 0 ens33

10.244.0.0 192.168.0.80 255.255.255.0 UG 0 0 0 ens33

10.244.1.0 0.0.0.0 255.255.255.0 U 0 0 0 cni0

10.244.2.0 192.168.0.82 255.255.255.0 UG 0 0 0 ens33

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 ens33

pod2 and its veth interface

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-xb2pp -- ethtool -S eth0

NIC statistics:

peer_ifindex: 5

rx_queue_0_xdp_packets: 0

rx_queue_0_xdp_bytes: 0

rx_queue_0_xdp_drops: 0

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-xb2pp -- ifconfig eth0

eth0 Link encap:Ethernet HWaddr 32:00:56:84:0A:28

inet addr:10.244.2.2 Bcast:10.244.2.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:13 errors:0 dropped:0 overruns:0 frame:0

TX packets:1 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:1178 (1.1 KiB) TX bytes:42 (42.0 B)

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-xb2pp -- route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.244.2.1 0.0.0.0 UG 0 0 0 eth0

10.244.0.0 10.244.2.1 255.255.0.0 UG 0 0 0 eth0

10.244.2.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

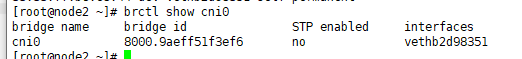

[root@node2 ~]# ip link show | grep ^5

5: vethb2d98351@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master cni0 state UP mode DEFAULT group default

[root@node2 ~]# ip link show vethb2d98351

5: vethb2d98351@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master cni0 state UP mode DEFAULT group default

link/ether fa:c9:75:b6:e8:44 brd ff:ff:ff:ff:ff:ff link-netnsid 0

[root@node2 ~]# ifconfig cni0

cni0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.244.2.1 netmask 255.255.255.0 broadcast 10.244.2.255

inet6 fe80::98ef:f5ff:fe1f:3ef6 prefixlen 64 scopeid 0x20<link>

ether 9a:ef:f5:1f:3e:f6 txqueuelen 1000 (Ethernet)

RX packets 4 bytes 168 (168.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 12 bytes 1036 (1.0 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

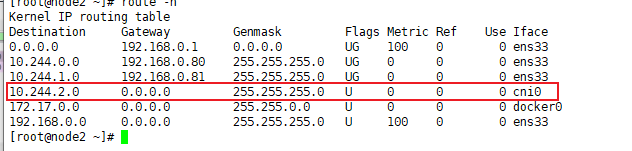

[root@node2 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.1 0.0.0.0 UG 100 0 0 ens33

10.244.0.0 192.168.0.80 255.255.255.0 UG 0 0 0 ens33

10.244.1.0 192.168.0.81 255.255.255.0 UG 0 0 0 ens33

10.244.2.0 0.0.0.0 255.255.255.0 U 0 0 0 cni0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 ens33

Cross-node traffic flow diagrams

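In host-gw mode, packets are not encapsulated at all. Instead, the host routing table sends traffic for a remote pod subnet to a gateway: the IP address of the node that hosts the destination pod. This is why host-gw requires Layer 2 connectivity between nodes. The gateway is the next hop on the local link, so the sending node must resolve its MAC address on that link (via ARP). If the nodes are not in the same Layer 2 domain, the gateway address cannot be reached at Layer 2, and routing cannot deliver the packet to its destination.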
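A rough check can illustrate the Layer 2 requirement. A host-gw route is only usable when its gateway (the remote node IP) is directly reachable on the sending interface. The sketch approximates "same Layer 2 segment" as "inside the subnet configured on ens33". That assumption holds in this article's flat 192.168.0.0/24 network but not in every topology. `next_hop_on_link` and the address 192.168.1.82 are hypothetical.

```python
import ipaddress


def next_hop_on_link(local_if_cidr: str, gateway: str) -> bool:
    """True if `gateway` lies inside the subnet configured on the local interface.

    Such an address can be resolved with ARP on that link, which a host-gw route
    (`<pod subnet> via <node IP>`) relies on.
    """
    iface = ipaddress.ip_interface(local_if_cidr)
    return ipaddress.ip_address(gateway) in iface.network


# node1's ens33 sits in 192.168.0.0/24 (its connected route in `route -n`).
print(next_hop_on_link("192.168.0.81/24", "192.168.0.82"))  # node2, same segment
print(next_hop_on_link("192.168.0.81/24", "192.168.1.82"))  # hypothetical node in another subnet
```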

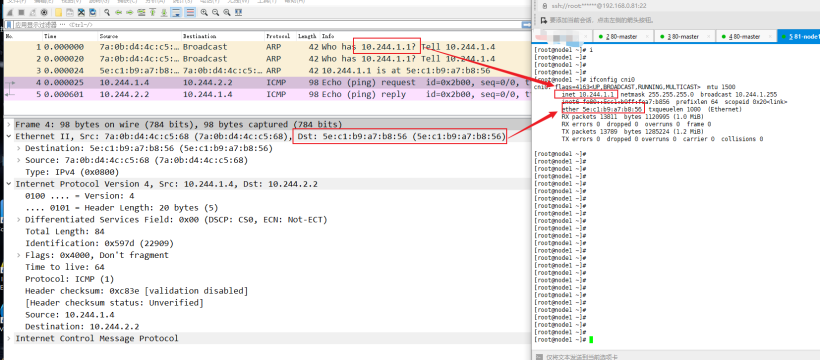

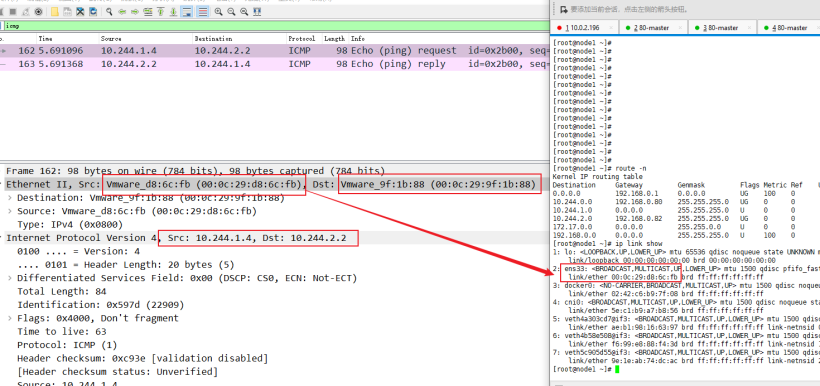

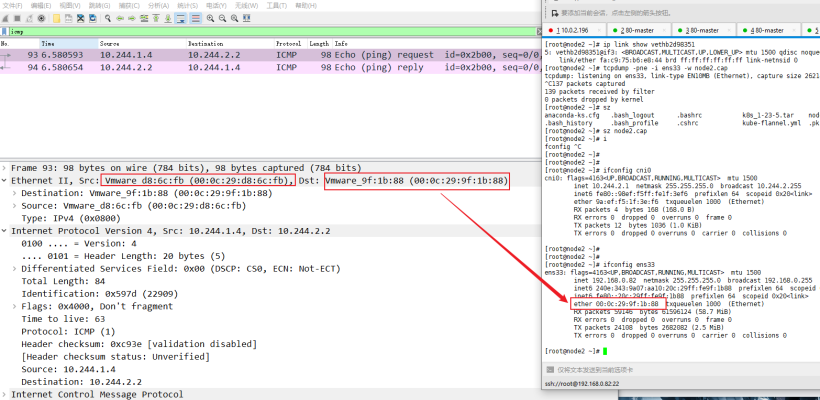

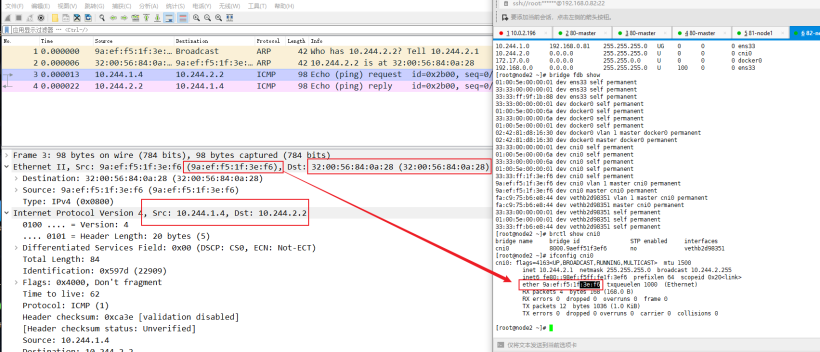

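As in the vxlan walkthrough, we capture packets on each pod's eth0 and on each node's ens33. We then follow the traffic through the hosts' routing tables.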

pod1 10.244.1.4 node1

pod2 10.244.2.2 node2

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-7jxzs -- ping -c 1 10.244.2.2

pod1.cap

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-7jxzs -- tcpdump -pne -i eth0 -w pod1.cap

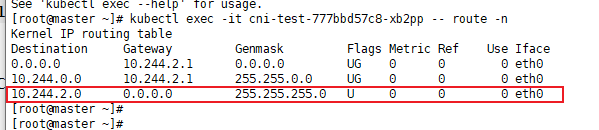

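pod2 is in a different subnet, so pod1 consults its own routing table. As shown in the figure, it uses the route 10.244.0.0/16 via gateway 10.244.1.1 on eth0.

The host-side peer of the pod's eth0 is attached to the cni0 bridge. The gateway address 10.244.1.1 is therefore the address of cni0 itself.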
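The pod-side decision is an ordinary longest-prefix match. This sketch encodes pod1's `route -n` output from above and looks up pod2's address. `POD1_ROUTES` and `lookup` are illustrative names. Metrics are ignored because no two of these routes share a prefix length.

```python
import ipaddress

# pod1's routing table: (destination/prefix, gateway or None if directly connected, interface)
POD1_ROUTES = [
    ("0.0.0.0/0", "10.244.1.1", "eth0"),
    ("10.244.0.0/16", "10.244.1.1", "eth0"),
    ("10.244.1.0/24", None, "eth0"),
]


def lookup(routes, dst: str):
    """Longest-prefix match: the most specific route containing dst wins."""
    addr = ipaddress.ip_address(dst)
    matches = [
        (ipaddress.ip_network(net), gw, dev)
        for net, gw, dev in routes
        if addr in ipaddress.ip_network(net)
    ]
    net, gw, dev = max(matches, key=lambda m: m[0].prefixlen)  # default route guarantees a match
    return str(net), gw, dev


print(lookup(POD1_ROUTES, "10.244.2.2"))  # pod2: ('10.244.0.0/16', '10.244.1.1', 'eth0')
print(lookup(POD1_ROUTES, "10.244.1.5"))  # hypothetical same-node pod: ('10.244.1.0/24', None, 'eth0')
```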

node1.cap

[root@node1 ~]# tcpdump -pne -i ens33 -w node1.cap

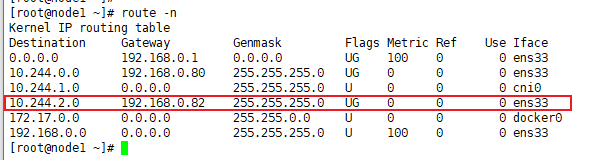

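node1's routing table shows that the gateway for the destination subnet 10.244.2.0/24 is node2's IP address, 192.168.0.82. node1 therefore forwards the packet out of ens33 to node2 using kernel IP forwarding (net.ipv4.ip_forward = 1).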
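node1 can only pass pod1's packet from cni0 to ens33 if IPv4 forwarding is enabled. A quick check reads the same value that `sysctl net.ipv4.ip_forward` reports. The helper names here are hypothetical.

```python
from pathlib import Path

PROC_PATH = "/proc/sys/net/ipv4/ip_forward"


def parse_ip_forward(text: str) -> bool:
    """Interpret the contents of /proc/sys/net/ipv4/ip_forward ("1" means enabled)."""
    return text.strip() == "1"


def ip_forward_enabled(path: str = PROC_PATH) -> bool:
    """Return True if this host forwards IPv4 packets that are not addressed to it."""
    return parse_ip_forward(Path(path).read_text())


if __name__ == "__main__":
    try:
        state = "enabled" if ip_forward_enabled() else "disabled"
        print(f"net.ipv4.ip_forward is {state}")
    except OSError:
        print(f"cannot read {PROC_PATH} (not a Linux host?)")
```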

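The capture also shows that the source and destination IP addresses are not changed along the way. As with any routed hop, only the Ethernet header (the source and destination MAC addresses) is rewritten.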
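You can also check this outside Wireshark. The following is a minimal, stdlib-only Python sketch that walks a classic libpcap file, the format `tcpdump -w` writes by default. For each Ethernet/IPv4 frame it prints the MAC and IPv4 addresses. It assumes an Ethernet link type and does not handle pcapng. `read_frames` and the script name used below are hypothetical.

```python
import ipaddress
import struct
import sys

LE_MAGICS = (b"\xd4\xc3\xb2\xa1", b"\x4d\x3c\xb2\xa1")  # micro-/nanosecond, little-endian
BE_MAGICS = (b"\xa1\xb2\xc3\xd4", b"\xa1\xb2\x3c\x4d")  # micro-/nanosecond, big-endian
ETHERTYPE_IPV4 = b"\x08\x00"


def fmt_mac(raw: bytes) -> str:
    """Format 6 raw bytes as aa:bb:cc:dd:ee:ff."""
    return ":".join(f"{b:02x}" for b in raw)


def read_frames(data: bytes):
    """Yield (src_mac, dst_mac, src_ip, dst_ip) for every Ethernet/IPv4 frame in a classic pcap."""
    if data[:4] in LE_MAGICS:
        endian = "<"
    elif data[:4] in BE_MAGICS:
        endian = ">"
    else:
        raise ValueError("not a classic pcap file (pcapng is not handled)")
    (linktype,) = struct.unpack(endian + "I", data[20:24])
    if linktype & 0xFFFF != 1:  # 1 = Ethernet
        raise ValueError(f"expected Ethernet captures, got link type {linktype}")
    offset = 24  # size of the global header
    while offset + 16 <= len(data):
        # Per-record header: ts_sec, ts_frac, captured length, original length.
        _, _, incl_len, _ = struct.unpack(endian + "IIII", data[offset:offset + 16])
        frame = data[offset + 16:offset + 16 + incl_len]
        offset += 16 + incl_len
        if len(frame) < 34 or frame[12:14] != ETHERTYPE_IPV4:
            continue  # skip ARP, IPv6 and truncated frames
        ip_hdr = frame[14:34]
        yield (fmt_mac(frame[6:12]), fmt_mac(frame[0:6]),
               str(ipaddress.IPv4Address(ip_hdr[12:16])),
               str(ipaddress.IPv4Address(ip_hdr[16:20])))


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], "rb") as f:
        for src_mac, dst_mac, src_ip, dst_ip in read_frames(f.read()):
            print(f"{src_mac} > {dst_mac}  {src_ip} > {dst_ip}")
```

Run it on the node, for example `python3 read_pcap.py node1.cap`. The pod captures are written inside the pods, so they first need to be copied out, for example with `kubectl cp`. Comparing the outputs, you would expect the same 10.244.1.4 > 10.244.2.2 pair with different MAC addresses at each hop.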

node2.cap

[root@node2 ~]# tcpdump -pne -i ens33 -w node2.cap

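On node2, the receiving side mirrors node1, and there is nothing to decapsulate. node2's routing table sends traffic for 10.244.2.0/24 directly to the cni0 bridge. pod2's peer veth interface (vethb2d98351) is a port on cni0, which acts as a virtual switch. The bridge therefore delivers the frame to that veth, which passes it on to pod2's eth0.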
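The last step on node2 is plain bridging. As a toy model, not the kernel's actual implementation, cni0 can be thought of as a learning switch. It remembers which port each source MAC was seen on, forwards by destination MAC, and floods when the destination is unknown or broadcast.

pod2's MAC (32:00:56:84:0A:28) and vethb2d98351 come from the outputs above. The second port, `veth-hypothetical`, and the `LearningBridge` class are invented for illustration.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningBridge:
    """Toy learning switch: MAC -> port table, forward by destination MAC, flood if unknown."""

    def __init__(self, ports):
        self.ports = list(ports)
        self.fdb = {}  # MAC -> port, similar in spirit to entries listed by `bridge fdb show`

    def learn(self, port: str, src_mac: str) -> None:
        """Record that src_mac was seen as a source on `port`."""
        self.fdb[src_mac.lower()] = port

    def forward(self, dst_mac: str, in_port=None) -> list:
        """Ports a frame leaves through; in_port=None means the host stack sent it via cni0."""
        dst = dst_mac.lower()
        out = self.fdb.get(dst)
        if dst == BROADCAST or out is None:
            return [p for p in self.ports if p != in_port]  # flood
        return [] if out == in_port else [out]


cni0 = LearningBridge(["vethb2d98351", "veth-hypothetical"])
# Earlier traffic from pod2 (e.g. its ARP reply) taught the bridge where its MAC lives.
cni0.learn("vethb2d98351", "32:00:56:84:0A:28")
print(cni0.forward("32:00:56:84:0A:28"))  # ['vethb2d98351']
```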

pod2.cap

[root@master ~]# kubectl exec -it cni-test-777bbd57c8-xb2pp -- tcpdump -pne -i eth0 -w pod2.cap
