
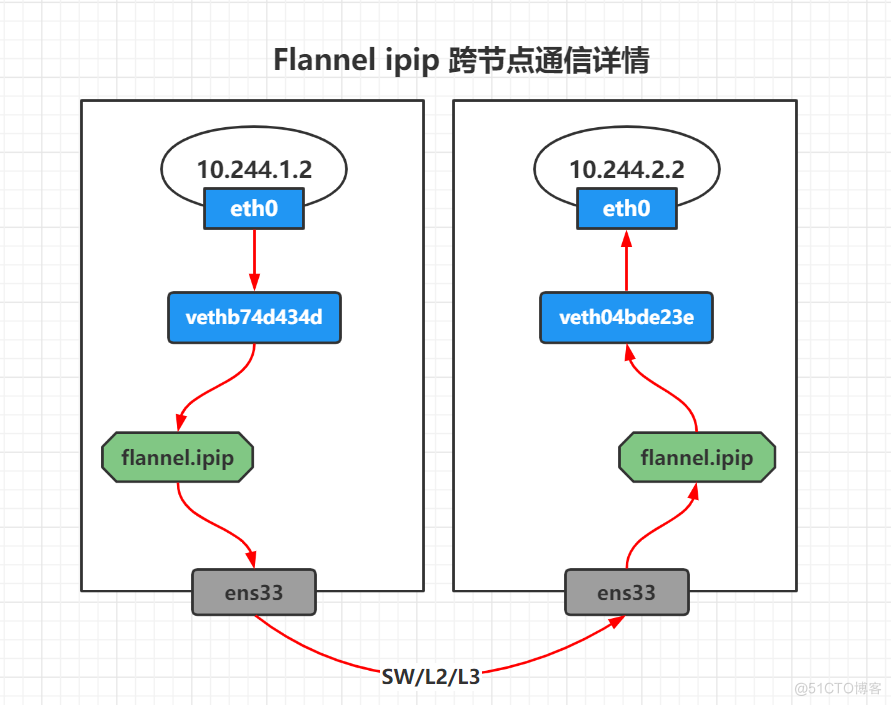

Flannel IPIP Cross-Node Communication

source link: https://blog.51cto.com/liujingyu/5360396



Installing and deploying Flannel in IPIP mode

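The IPIP tunnel is the simplest tunnel type. It has the lowest overhead, but it can only encapsulate IPv4 unicast traffic, so you cannot run OSPF, RIP, or any other multicast-based protocol over it.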
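To put "lowest overhead" into numbers, here is a small arithmetic sketch. It assumes the underlay NIC (ens33) uses the common 1500-byte MTU, which this article does not show. IPIP adds only a 20-byte outer IPv4 header, while VXLAN adds an outer IPv4 header, a UDP header, a VXLAN header and the inner Ethernet header.

```bash
#!/usr/bin/env bash
# Sketch: per-packet encapsulation overhead of IPIP vs VXLAN.
# ASSUMPTION: the underlay NIC (ens33) uses a 1500-byte MTU.
set -euo pipefail

underlay_mtu=1500
ipip_overhead=20                       # outer IPv4 header only
vxlan_overhead=$((20 + 8 + 8 + 14))    # outer IPv4 + UDP + VXLAN + inner Ethernet

ipip_mtu=$((underlay_mtu - ipip_overhead))
vxlan_mtu=$((underlay_mtu - vxlan_overhead))

echo "IPIP  overhead: ${ipip_overhead} bytes -> pod MTU ${ipip_mtu}"
echo "VXLAN overhead: ${vxlan_overhead} bytes -> pod MTU ${vxlan_mtu}"
```

The IPIP figure of 1480 agrees with the MTU 1480 reported on the pod eth0, the veth peers and flannel.ipip in the outputs later in this article; 1450 is the value usually seen on flannel's VXLAN interface on a 1500-byte network.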

To deploy IPIP mode, simply replace "Type": "vxlan" with "Type": "ipip" in the backend section of Flannel's net-conf.json.

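If you want host-gw-like behavior between nodes on the same layer-2 subnet, set DirectRouting to true; IPIP encapsulation is then used only for nodes on different subnets.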

```
wget https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml
sed -i "s/vxlan/ipip/g" kube-flannel.yml
```
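The sed edit can be rehearsed offline before it touches the cluster. The sketch below writes a hypothetical excerpt of the net-conf.json key from kube-flannel.yml (the real manifest may contain more fields), applies the same substitution, and, only when the environment variable DIRECT_ROUTING=true is set, adds a DirectRouting key. That variable and the second sed are illustrative additions, not part of the article. GNU sed is assumed for -i and for \n in the replacement.

```bash
#!/usr/bin/env bash
# Sketch: rehearse the vxlan -> ipip edit on a local copy of net-conf.json.
# ASSUMPTION: the excerpt below approximates the ConfigMap in kube-flannel.yml.
set -euo pipefail

work=$(mktemp -d)
trap 'rm -rf "$work"' EXIT
manifest="$work/kube-flannel.yml"

cat > "$manifest" <<'EOF'
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
EOF

# Same substitution as the article: every "vxlan" becomes "ipip".
sed -i "s/vxlan/ipip/g" "$manifest"

# Hypothetical opt-in: route directly (host-gw style) between nodes on one subnet.
if [[ "${DIRECT_ROUTING:-false}" == true ]]; then
  sed -i 's/"Type": "ipip"/"Type": "ipip",\n        "DirectRouting": true/' "$manifest"
fi

cat "$manifest"
```

Because s/vxlan/ipip/g rewrites every occurrence in the file, it is worth grepping the real manifest for leftover or unexpected matches before applying it.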

Cross-node communication

```
[root@master ~]# kubectl create deployment cni-test --image=burlyluo/nettoolbox --replicas=2
[root@master ~]# kubectl get pod -o wide
NAME                        READY   STATUS              RESTARTS   AGE   IP       NODE              NOMINATED NODE   READINESS GATES
cni-test-777bbd57c8-jl2bh   0/1     ContainerCreating   0          5s    <none>   node1.whale.com   <none>           <none>
cni-test-777bbd57c8-p5576   0/1     ContainerCreating   0          5s    <none>   node2.whale.com   <none>           <none>
```


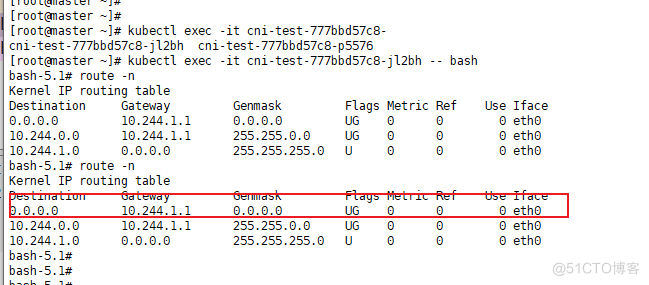

pod1 information

```
[root@master ~]# kubectl exec -it cni-test-777bbd57c8-jl2bh -- bash
bash-5.1# ifconfig eth0
eth0      Link encap:Ethernet  HWaddr EA:BC:22:E6:7A:FA
          inet addr:10.244.1.2  Bcast:10.244.1.255  Mask:255.255.255.0
          UP BROADCAST RUNNING MULTICAST  MTU:1480  Metric:1
          RX packets:13 errors:0 dropped:0 overruns:0 frame:0
          TX packets:1 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1178 (1.1 KiB)  TX bytes:42 (42.0 B)

bash-5.1# route -n
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
0.0.0.0         10.244.1.1      0.0.0.0         UG    0      0        0 eth0
10.244.0.0      10.244.1.1      255.255.0.0     UG    0      0        0 eth0
10.244.1.0      0.0.0.0         255.255.255.0   U     0      0        0 eth0

bash-5.1# ethtool -S eth0
NIC statistics:
     peer_ifindex: 7
     rx_queue_0_xdp_packets: 0
     rx_queue_0_xdp_bytes: 0
     rx_queue_0_xdp_drops: 0

[root@node1 ~]# ip link show | grep ^7
7: vethb74d434d@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP mode DEFAULT group default
[root@node1 ~]# ip link show vethb74d434d
7: vethb74d434d@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP mode DEFAULT group default
    link/ether fe:b1:c5:f4:d0:b2 brd ff:ff:ff:ff:ff:ff link-netnsid 0
```

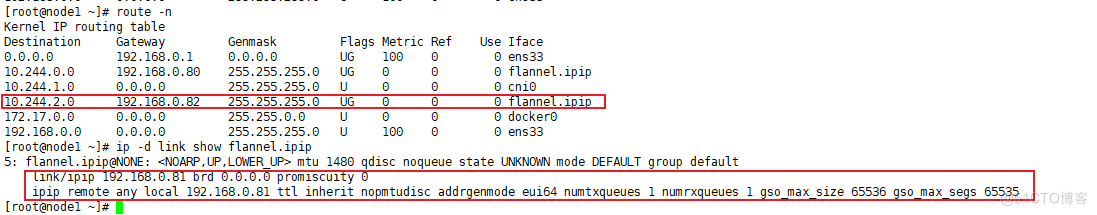

```
[root@node1 ~]# route -n
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
0.0.0.0         192.168.0.1     0.0.0.0         UG    100    0        0 ens33
10.244.0.0      192.168.0.80    255.255.255.0   UG    0      0        0 flannel.ipip
10.244.1.0      0.0.0.0         255.255.255.0   U     0      0        0 cni0
10.244.2.0      192.168.0.82    255.255.255.0   UG    0      0        0 flannel.ipip
172.17.0.0      0.0.0.0         255.255.0.0     U     0      0        0 docker0
192.168.0.0     0.0.0.0         255.255.255.0   U     100    0        0 ens33

[root@node1 ~]# ip -d link show flannel.ipip
5: flannel.ipip@NONE: <NOARP,UP,LOWER_UP> mtu 1480 qdisc noqueue state UNKNOWN mode DEFAULT group default
    link/ipip 192.168.0.81 brd 0.0.0.0 promiscuity 0
    ipip remote any local 192.168.0.81 ttl inherit nopmtudisc addrgenmode eui64 numtxqueues 1 numrxqueues 1 gso_max_size 65536 gso_max_segs 65535
```


pod2 information

```
[root@master ~]# kubectl exec -it cni-test-777bbd57c8-p5576 -- bash
bash-5.1# ifconfig eth0
eth0      Link encap:Ethernet  HWaddr 06:D6:FD:BF:4C:02
          inet addr:10.244.2.2  Bcast:10.244.2.255  Mask:255.255.255.0
          UP BROADCAST RUNNING MULTICAST  MTU:1480  Metric:1
          RX packets:13 errors:0 dropped:0 overruns:0 frame:0
          TX packets:1 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1178 (1.1 KiB)  TX bytes:42 (42.0 B)

bash-5.1# route -n
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
0.0.0.0         10.244.2.1      0.0.0.0         UG    0      0        0 eth0
10.244.0.0      10.244.2.1      255.255.0.0     UG    0      0        0 eth0
10.244.2.0      0.0.0.0         255.255.255.0   U     0      0        0 eth0

bash-5.1# ethtool -S eth0
NIC statistics:
     peer_ifindex: 7
     rx_queue_0_xdp_packets: 0
     rx_queue_0_xdp_bytes: 0
     rx_queue_0_xdp_drops: 0

[root@node2 ~]# ip link show | grep ^7
7: veth04bde23e@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP mode DEFAULT group default
[root@node2 ~]# ip link show veth04bde23e
7: veth04bde23e@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP mode DEFAULT group default
    link/ether 6e:67:70:7a:d2:51 brd ff:ff:ff:ff:ff:ff link-netnsid 0
```

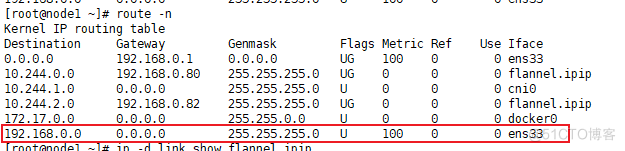

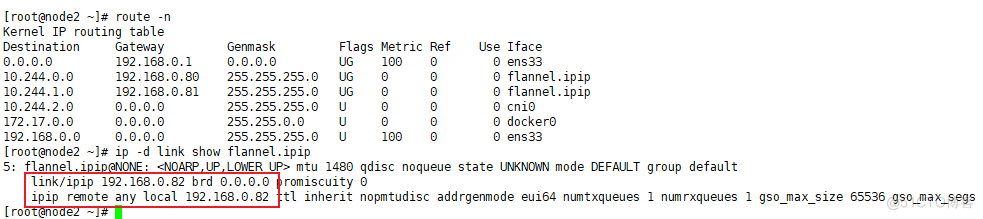

```
[root@node2 ~]# route -n
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
0.0.0.0         192.168.0.1     0.0.0.0         UG    100    0        0 ens33
10.244.0.0      192.168.0.80    255.255.255.0   UG    0      0        0 flannel.ipip
10.244.1.0      192.168.0.81    255.255.255.0   UG    0      0        0 flannel.ipip
10.244.2.0      0.0.0.0         255.255.255.0   U     0      0        0 cni0
172.17.0.0      0.0.0.0         255.255.0.0     U     0      0        0 docker0
192.168.0.0     0.0.0.0         255.255.255.0   U     100    0        0 ens33

[root@node2 ~]# ip -d link show flannel.ipip
5: flannel.ipip@NONE: <NOARP,UP,LOWER_UP> mtu 1480 qdisc noqueue state UNKNOWN mode DEFAULT group default
    link/ipip 192.168.0.82 brd 0.0.0.0 promiscuity 0
    ipip remote any local 192.168.0.82 ttl inherit nopmtudisc addrgenmode eui64 numtxqueues 1 numrxqueues 1 gso_max_size 65536 gso_max_segs 65535
```


Cross-node data flow diagram

- pod1: 10.244.1.2, on node1
- pod2: 10.244.2.2, on node2

```
[root@master ~]# kubectl exec -it cni-test-777bbd57c8-jl2bh -- ping -c 1 10.244.2.2
```

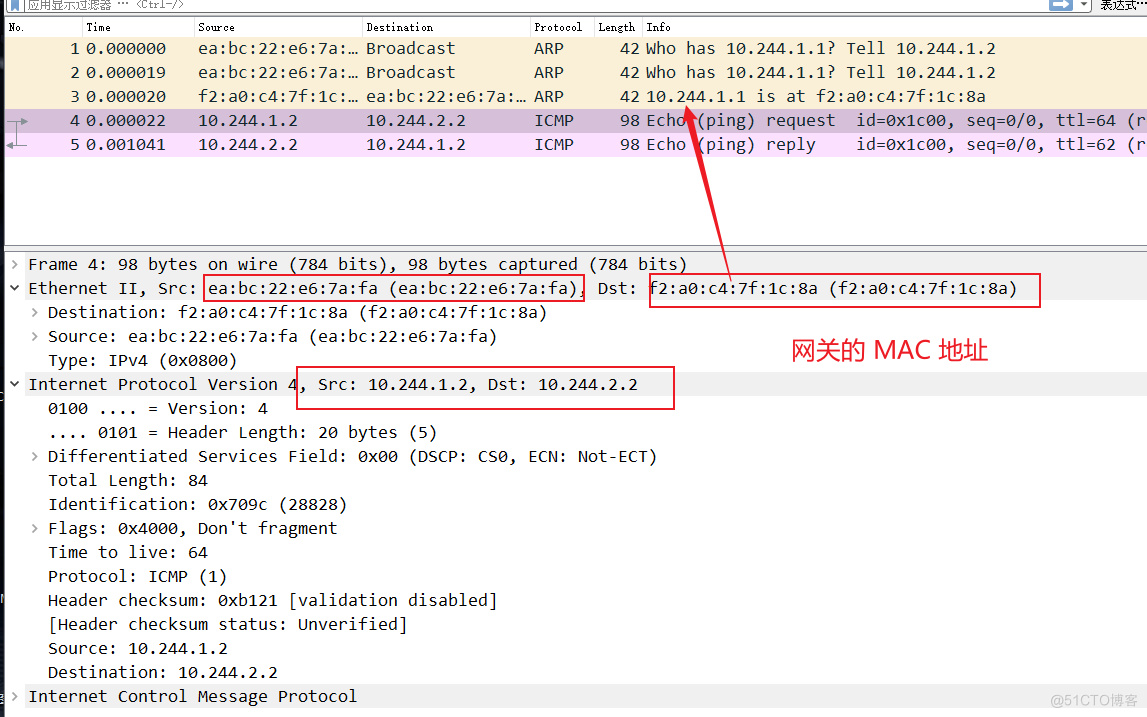

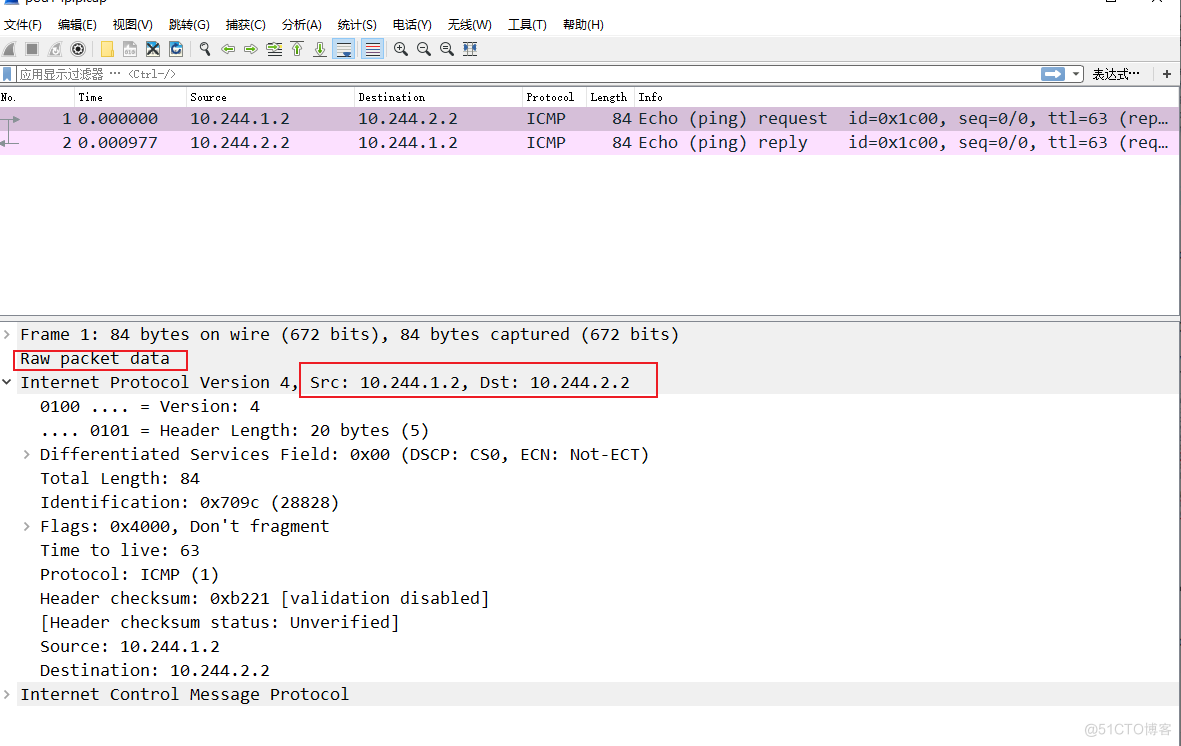

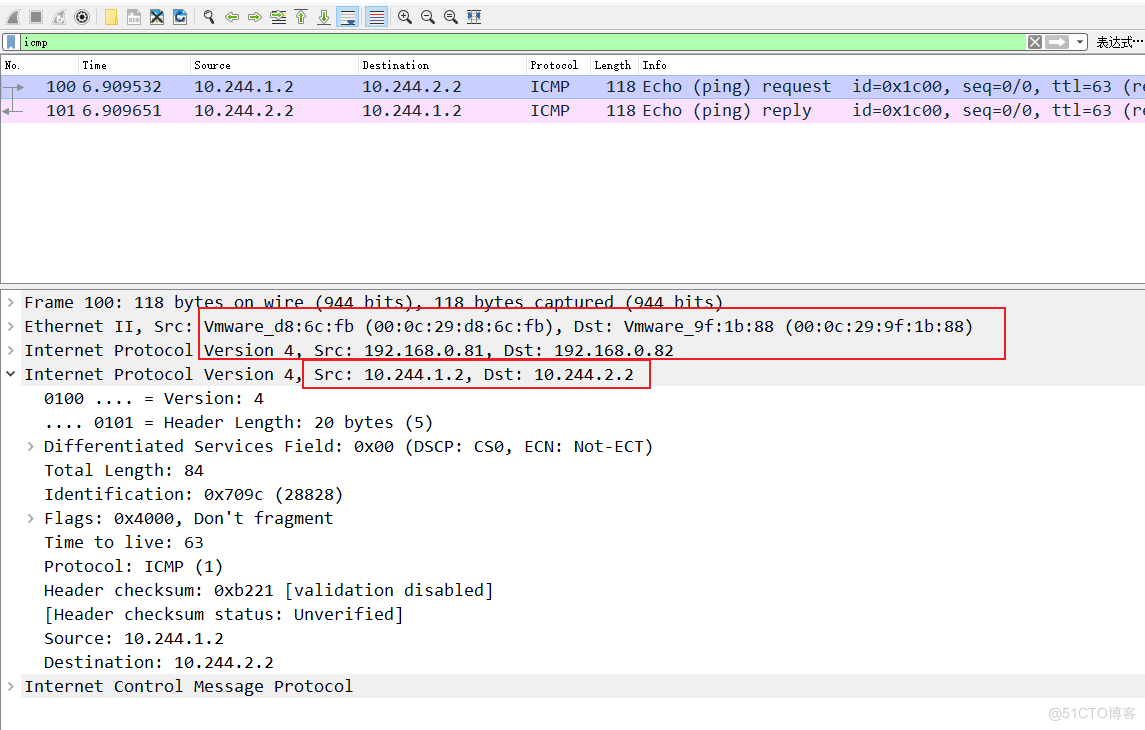

pod1.cap

```
[root@master ~]# kubectl exec -it cni-test-777bbd57c8-jl2bh -- tcpdump -pne -i eth0 -w pod1.cap
```

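The routing table inside pod1 shows that traffic to 10.244.2.2 matches the 10.244.0.0/16 route, so it leaves through eth0 toward the gateway 10.244.1.1.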
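The kernel chooses the matching route with the longest prefix. The bash sketch below replays that lookup against pod1's table, copied from the route -n output above; 10.244.1.3 is a hypothetical same-node address used for contrast. It illustrates longest-prefix match only and is not how the kernel's FIB is actually implemented.

```bash
#!/usr/bin/env bash
# Sketch: longest-prefix-match lookup over pod1's routing table.
# Routes are copied from pod1's "route -n" output; illustration only.
set -euo pipefail

# Dotted quad -> 32-bit integer
ip2int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# destination gateway genmask iface
routes='0.0.0.0 10.244.1.1 0.0.0.0 eth0
10.244.0.0 10.244.1.1 255.255.0.0 eth0
10.244.1.0 0.0.0.0 255.255.255.0 eth0'

lookup() {
  local target best_mask=-1 best="" dest gw mask dev t m
  target=$(ip2int "$1")
  while read -r dest gw mask dev; do
    t=$(ip2int "$dest")
    m=$(ip2int "$mask")
    # Match when the masked target equals the route's destination; with
    # contiguous netmasks, a numerically larger mask means a longer prefix.
    if (( (target & m) == t && m > best_mask )); then
      best_mask=$m
      best="$dest/$mask via $gw dev $dev"
    fi
  done <<< "$routes"
  echo "$best"
}

lookup 10.244.2.2   # pod2 on node2
lookup 10.244.1.3   # hypothetical second pod on node1
```

For 10.244.2.2 the /16 entry beats the default route, so the packet is handed to 10.244.1.1; for a same-node address the /24 entry wins, and its 0.0.0.0 gateway means the destination is reached directly on eth0 without a gateway.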

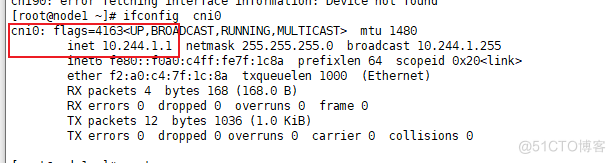

Checking the address of cni0 on node1 confirms the gateway: 10.244.1.1 is the address of the cni0 bridge.

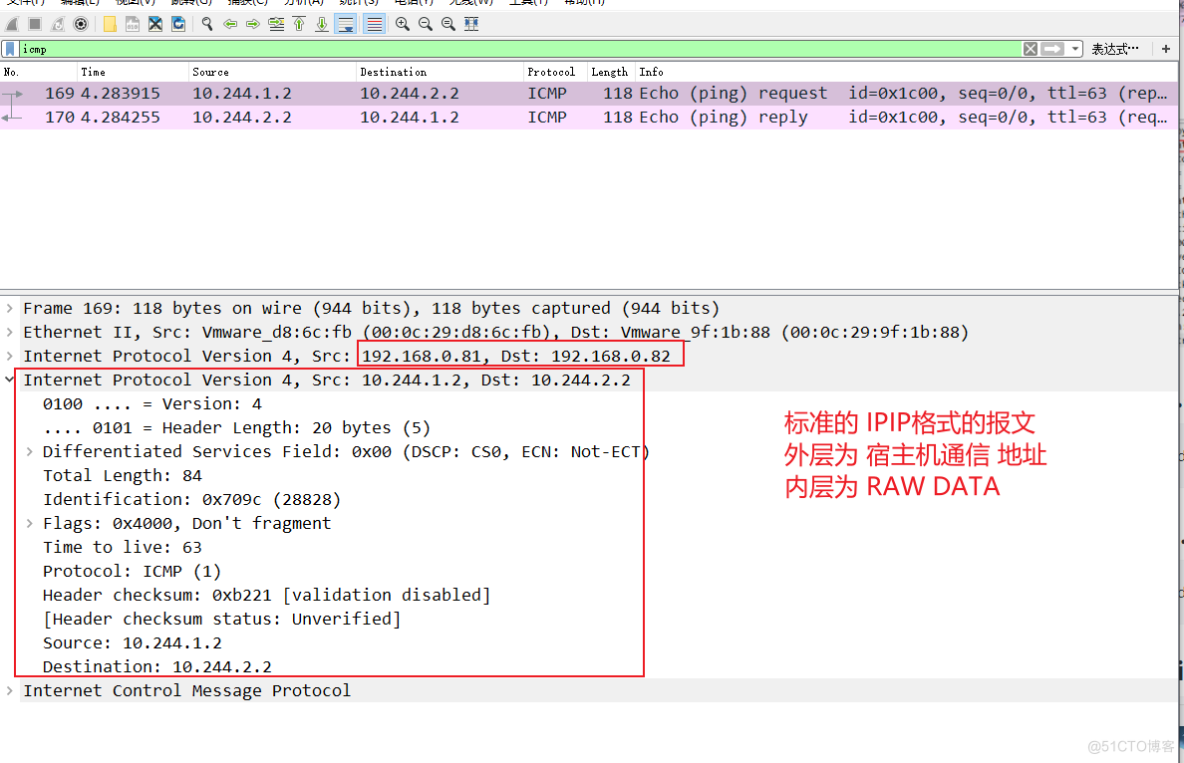

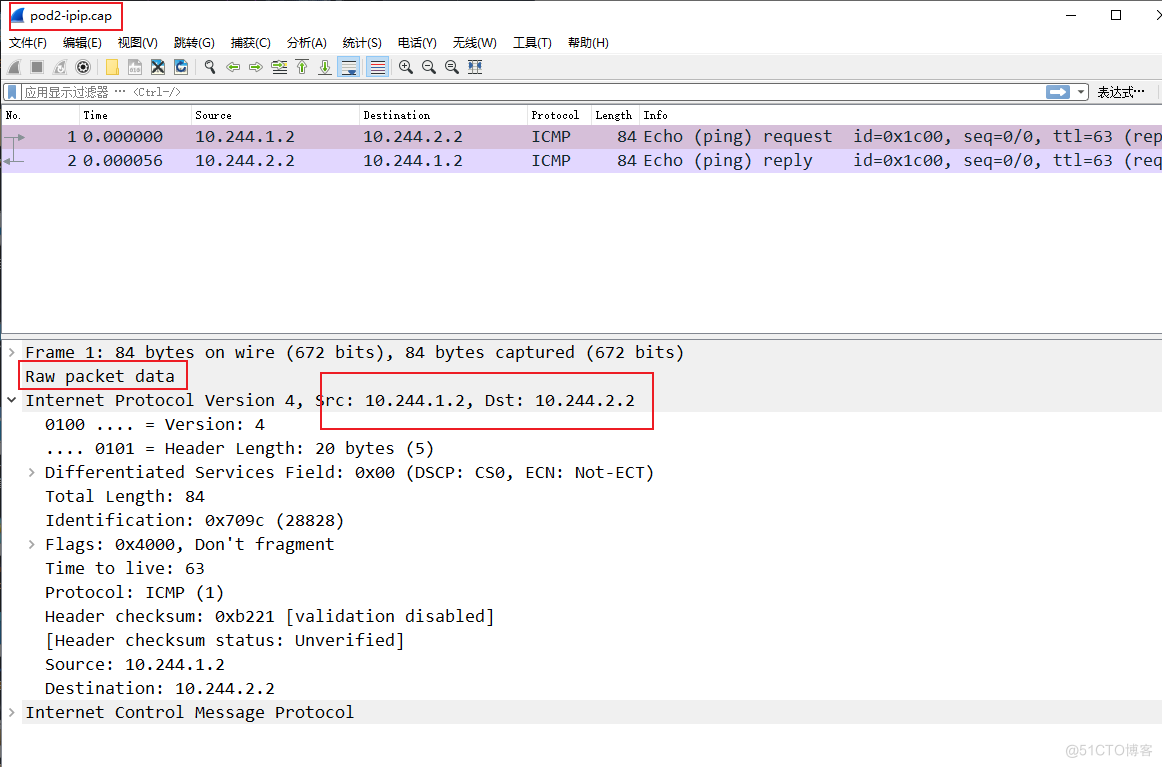

pod1-ipip.cap

```
[root@node1 ~]# tcpdump -pne -i flannel.ipip -w pod1-ipip.cap
```

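According to node1's routing table, the route toward pod2's subnet (10.244.2.0/24) points to the flannel.ipip interface, with node2's address 192.168.0.82 as the gateway.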
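Each remote node's pod subnet becomes one route on flannel.ipip whose gateway is that node's IP. The sketch below rebuilds node1's two tunnel routes from the subnet-to-node mapping visible in the route tables above and only prints equivalent iproute2 commands; it does not run them. The onlink flag is an assumption about what a hand-written equivalent needs, because the gateway lives on ens33's subnet rather than on flannel.ipip; flannel itself programs its routes through netlink.

```bash
#!/usr/bin/env bash
# Sketch: derive node1's flannel.ipip routes from per-node subnet leases.
# The mapping is read off the route tables in this article; commands are printed, not run.
set -euo pipefail

self=192.168.0.81                 # node1's ens33 address
declare -A leases=(
  [10.244.0.0/24]=192.168.0.80
  [10.244.1.0/24]=192.168.0.81    # node1's own subnet, bridged on cni0
  [10.244.2.0/24]=192.168.0.82    # node2
)

cmds=()
for subnet in $(printf '%s\n' "${!leases[@]}" | sort); do
  gw=${leases[$subnet]}
  if [[ $gw == "$self" ]]; then
    continue                      # local pods are reached through cni0, not the tunnel
  fi
  # ASSUMPTION: 'onlink' lets the kernel accept a gateway that is not on flannel.ipip's network.
  cmds+=("ip route replace $subnet via $gw dev flannel.ipip onlink")
done

printf '%s\n' "${cmds[@]}"
```

The printed lines correspond to the two UG entries on flannel.ipip in node1's route -n output; node1's own 10.244.1.0/24 is skipped because it is served by cni0.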

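Inspecting the device again shows that flannel.ipip is an ipip device (link/ipip) configured with remote any local 192.168.0.81. Because no fixed remote is set, the kernel uses each route's gateway (here 192.168.0.82) as the outer destination address. The device encapsulates only the IP packet and adds no MAC header, so the capture shows RAW DATA.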
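To make the encapsulation concrete, the sketch below assembles the 20-byte outer IPv4 header node1 would prepend (source 192.168.0.81, destination 192.168.0.82, protocol 4 for IP-in-IP) and fills in the RFC 1071 header checksum. The inner length (84 bytes for a default 56-byte ping), the IP ID, TOS and flags of 0, and the TTL of 63 are assumptions for illustration, not values read from the article's captures. The TTL follows from ttl inherit, which copies the inner packet's TTL.

```bash
#!/usr/bin/env bash
# Sketch: build the 20-byte outer IPv4 header that node1's flannel.ipip
# prepends to pod1's ICMP packet. Values marked ASSUMED are illustrative.
set -euo pipefail

# Dotted quad -> two 16-bit hex words, e.g. 192.168.0.81 -> "c0a8 0051"
ip_words() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  printf '%02x%02x %02x%02x' "$a" "$b" "$c" "$d"
}

src=192.168.0.81     # node1: "local 192.168.0.81" on flannel.ipip
dst=192.168.0.82     # node2: gateway of the 10.244.2.0/24 route
inner_len=84         # ASSUMED: 20 B inner IP + 8 B ICMP + 56 B default ping payload
ttl=63               # ASSUMED: "ttl inherit" copies the inner TTL (64 minus node1's hop)

# Header as 16-bit words: version/IHL + TOS (ASSUMED 0), total length,
# ID (ASSUMED 0), flags/fragment offset (ASSUMED 0), TTL + protocol 4 (IP-in-IP),
# checksum placeholder, source address, destination address.
words=(4500 "$(printf '%04x' $((20 + inner_len)))" 0000 0000
       "$(printf '%02x04' "$ttl")" 0000 $(ip_words "$src") $(ip_words "$dst"))

# RFC 1071 Internet checksum: one's-complement sum of all 16-bit words.
sum=0
for w in "${words[@]}"; do
  sum=$((sum + 16#$w))
done
while (( sum >> 16 )); do
  sum=$(( (sum & 0xffff) + (sum >> 16) ))
done
words[5]=$(printf '%04x' $(( ~sum & 0xffff )))

header=$(printf '%s' "${words[@]}")
echo "outer IPv4 header: $header"
```

The header is followed directly by the inner IPv4 packet, with no Ethernet header in between, which is exactly what a capture on flannel.ipip contains.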

Analyzing the captured packets also confirms RAW DATA: the frames start directly with the IP header.
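The RAW DATA label comes from the capture's link-layer type. On Linux, tcpdump typically records a tunnel device such as flannel.ipip as LINKTYPE_RAW (101), which Wireshark shows as raw packet data, while a capture on ens33 is Ethernet (1). The sketch below reads that field (offset 20 of a classic pcap file header) with od. Since the article's files are not available here, it writes a synthetic 24-byte header to a temporary file; the function name pcap_linktype is hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: print the link-layer type stored in a classic pcap file header.
# 1 = Ethernet (LINKTYPE_ETHERNET), 101 = raw IP (LINKTYPE_RAW).
set -euo pipefail

pcap_linktype() {
  local magic
  local -a b
  magic=$(od -An -tx1 -N4 "$1" | tr -d ' \n')
  read -r -a b <<< "$(od -An -tx1 -j20 -N4 "$1")"   # bytes at offsets 20..23
  case "$magic" in
    d4c3b2a1|4d3cb2a1) echo $(( 16#${b[3]}${b[2]}${b[1]}${b[0]} )) ;;  # little-endian file
    a1b2c3d4|a1b23c4d) echo $(( 16#${b[0]}${b[1]}${b[2]}${b[3]} )) ;;  # big-endian file
    *) echo "not a classic pcap file: $1" >&2; return 1 ;;
  esac
}

# Synthetic little-endian header: magic, version 2.4, thiszone 0, sigfigs 0,
# snaplen 262144, linktype 101. This is NOT the article's capture.
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
printf '\xd4\xc3\xb2\xa1\x02\x00\x04\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x04\x00\x65\x00\x00\x00' > "$demo"

echo "linktype: $(pcap_linktype "$demo")"
```

On node1, pcap_linktype pod1-ipip.cap would be expected to print 101 and pcap_linktype node1.cap to print 1, assuming tcpdump wrote its default classic pcap format rather than pcapng.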

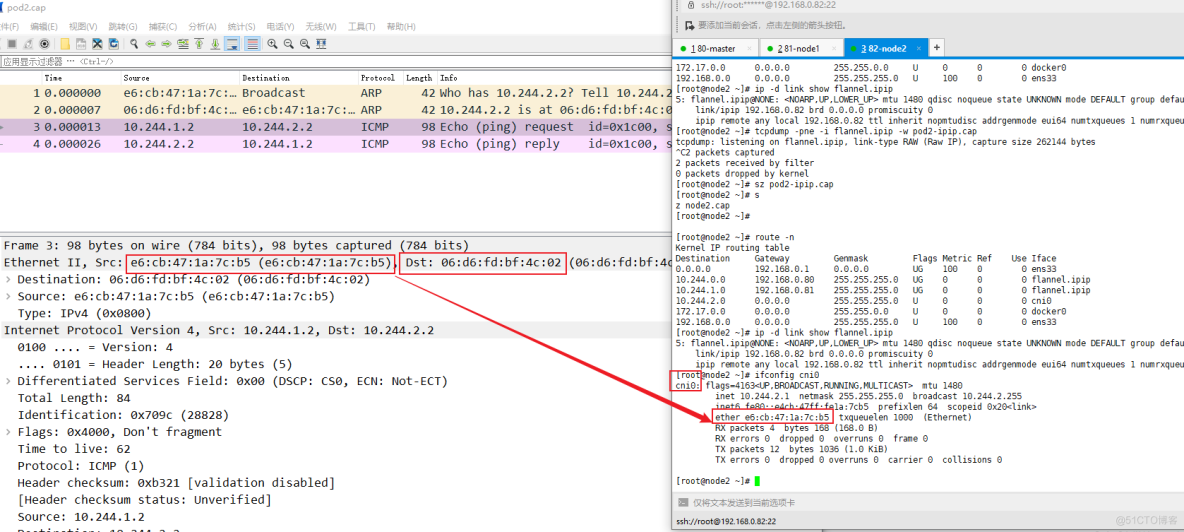

node1.cap

```
[root@node1 ~]# tcpdump -pne -i ens33 -w node1.cap
```

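For the outer layer-2 addresses, node1 and node2 sit on the same 192.168.0.0/24 segment, so a direct ARP lookup is enough to obtain the communication four-tuple (source and destination MAC and IP addresses).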

node2.cap

```
[root@node2 ~]# tcpdump -pne -i ens33 -w node2.cap
```

pod2-ipip.cap

```
[root@node2 ~]# tcpdump -pne -i flannel.ipip -w pod2-ipip.cap
```

pod2.cap

```
[root@master ~]# kubectl exec -it cni-test-777bbd57c8-p5576 -- tcpdump -pne -i eth0 -w pod2.cap
```
