[GMM] Theory and Implementation

source link: https://www.guofei.site/2017/11/10/gmm.html


November 10, 2017, Author: Guofei

Category: 3-2-Clustering, Article No.: 304

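Copyright notice: This article was written by Guofei. You may repost it freely, but you must cite the original link and notify the author.

Original link: https://www.guofei.site/2017/11/10/gmm.html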

Model Introduction

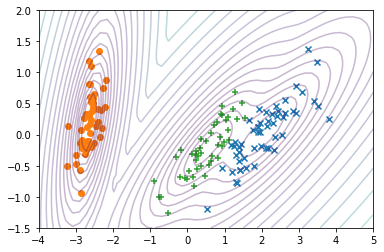

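GMM (Gaussian mixture model) is a clustering model. Its distinguishing feature is that it yields probabilities: each sample gets a probability of belonging to each cluster rather than only a hard label.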

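$$P(y \mid \theta) = \sum_{k=1}^{K} \alpha_k \, \phi(y \mid \theta_k)$$

where $\alpha_k \ge 0$ and $\sum_{k=1}^{K} \alpha_k = 1$, and each component is a Gaussian density with parameters $\theta_k = (u_k, \sigma_k^2)$:

$$\phi(y \mid \theta_k) = \frac{1}{\sqrt{2\pi}\,\sigma_k} \exp\left(-\frac{(y - u_k)^2}{2\sigma_k^2}\right)$$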
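To make the mixture density concrete, here is a minimal NumPy sketch that evaluates it for the univariate case. The function names (`gaussian_pdf`, `gmm_pdf`) and the parameter values are hypothetical choices for illustration, not part of the original article.

```python
import numpy as np

def gaussian_pdf(y, mean, sigma):
    """Univariate Gaussian density phi(y | theta_k), with theta_k = (mean, sigma)."""
    return np.exp(-(y - mean) ** 2 / (2 * sigma ** 2)) / (np.sqrt(2 * np.pi) * sigma)

def gmm_pdf(y, weights, means, sigmas):
    """Mixture density P(y | theta) = sum_k alpha_k * phi(y | theta_k)."""
    y = np.asarray(y, dtype=float)[..., None]  # trailing axis broadcasts over the K components
    return np.sum(weights * gaussian_pdf(y, means, sigmas), axis=-1)

# Hypothetical parameters for a two-component mixture
weights = np.array([0.3, 0.7])   # alpha_k, must sum to 1
means = np.array([-2.0, 1.0])    # u_k
sigmas = np.array([0.5, 1.5])    # sigma_k

grid = np.linspace(-10.0, 10.0, 2001)
density = gmm_pdf(grid, weights, means, sigmas)
area = np.sum(density) * (grid[1] - grid[0])  # Riemann sum of the density over the grid
```

Since the weights sum to 1 and each component integrates to 1, `area` should come out very close to 1, which is a quick sanity check on the formula.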

Parameter Estimation

The parameters are estimated by maximum likelihood estimation (MLE) [1].
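As a sketch of what the MLE objective looks like here, assume $N$ independent observations $y_1, \dots, y_N$ (this notation is an assumption and does not appear in the original). The log-likelihood of the mixture is then

$$
\log L(\theta) = \sum_{j=1}^{N} \log \left( \sum_{k=1}^{K} \alpha_k \, \phi(y_j \mid \theta_k) \right), \qquad \hat{\theta} = \arg\max_{\theta} \log L(\theta) \quad \text{subject to} \quad \sum_{k=1}^{K} \alpha_k = 1
$$

Because the sum over components sits inside the logarithm, setting the derivatives to zero does not give a closed-form solution for $\alpha_k$, $u_k$ and $\sigma_k$.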

To solve the MLE problem, the EM algorithm is introduced [2].
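The following is a minimal sketch of EM for a univariate GMM, written in plain NumPy. It is not the author's implementation: the function name `em_gmm_1d`, the initialization scheme, the fixed iteration count, and the synthetic data are all assumptions made for illustration. The E-step computes each component's posterior probability for every sample; the M-step re-estimates the weights, means, and standard deviations from those probabilities.

```python
import numpy as np

def em_gmm_1d(y, n_components, n_iter=200, seed=0):
    """Fit a univariate GMM with a plain EM loop (fixed iteration count, for brevity)."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    n = y.size
    # Initialization (an assumption): random samples as means, pooled std, equal weights
    means = rng.choice(y, size=n_components, replace=False)
    sigmas = np.full(n_components, y.std())
    weights = np.full(n_components, 1.0 / n_components)

    for _ in range(n_iter):
        # E-step: gamma[j, k] = posterior probability of component k given y_j
        dens = np.exp(-(y[:, None] - means) ** 2 / (2 * sigmas ** 2))
        dens /= np.sqrt(2 * np.pi) * sigmas
        joint = weights * dens
        gamma = joint / joint.sum(axis=1, keepdims=True)

        # M-step: weighted maximum-likelihood updates
        nk = gamma.sum(axis=0)
        means = (gamma * y[:, None]).sum(axis=0) / nk
        sigmas = np.sqrt((gamma * (y[:, None] - means) ** 2).sum(axis=0) / nk)
        weights = nk / n

    return weights, means, sigmas

# Synthetic data drawn from two well-separated Gaussians (hypothetical example)
rng = np.random.default_rng(42)
y = np.concatenate([rng.normal(-3.0, 1.0, 400), rng.normal(4.0, 1.5, 600)])
weights, means, sigmas = em_gmm_1d(y, n_components=2)
```

A production implementation would also monitor the log-likelihood for convergence and guard against a component's variance collapsing to zero; both are omitted here to keep the sketch short.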

Python Implementation

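from sklearn.decomposition import PCA
from sklearn.mixture import GaussianMixture

pca_scaled_data = PCA(n_components=2).fit_transform(data)  # data: (n_samples, n_features) array, assumed already loaded and scaled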

gm = GaussianMixture(n_components=3, n_init=3)  # 3 components; the best of 3 initializations is kept

gm.fit(pca_scaled_data)  # returns the fitted estimator; its printed repr follows

GaussianMixture(covariance_type='full', init_params='kmeans', max_iter=100, means_init=None, n_components=3, n_init=3, precisions_init=None, random_state=None, reg_covar=1e-06, tol=0.001, verbose=0, verbose_interval=10, warm_start=False, weights_init=None)

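gm.predict(pca_scaled_data)  # hard cluster label for each sample

gm.predict_proba(pca_scaled_data)  # posterior probability of each component for each sample

gm.bic(pca_scaled_data)  # Bayesian information criterion of the fitted model (lower is better)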
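One common use of `bic` is choosing the number of components. The sketch below fits one `GaussianMixture` per candidate count and keeps the one with the lowest BIC. The synthetic data, the candidate range, and the variable names (`X`, `bics`, `best_k`) are assumptions for illustration and are not taken from the original article.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

# Synthetic 2-D data with three well-separated clusters (hypothetical example)
rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [6.0, 6.0], [-6.0, 6.0]])
X = np.vstack([rng.normal(c, 1.0, size=(200, 2)) for c in centers])

# Fit one model per candidate component count and record its BIC
candidates = range(1, 7)
bics = []
for k in candidates:
    gm = GaussianMixture(n_components=k, n_init=3, random_state=0).fit(X)
    bics.append(gm.bic(X))

best_k = candidates[int(np.argmin(bics))]  # the candidate with the lowest BIC
```

BIC penalizes the number of free parameters, so adding components only pays off when the likelihood improves enough to offset the penalty.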

References
