#Cloud Native Essay Contest# Building a self-hosted highly available k8s cluster

source link: https://blog.51cto.com/lansonli/5432903

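Building a self-hosted highly available k8s cluster

I. Base environment on all nodes

192.168.0.x: the host (machine) network

10.96.0.0/16: the Service network

196.16.0.0/16: the Pod network

1. Environment preparation and kernel upgrade

Upgrade the kernel on every machine first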

cat /etc/redhat-release

# CentOS Linux release 7.9.2009 (Core)

# Set the hostname; it must not be localhost

hostnamectl set-hostname k8s-xxx

# Cluster plan

k8s-master1 k8s-master2 k8s-master3 k8s-master-lb k8s-node1 k8s-node2 ... k8s-nodeN

# Add name resolution entries on every machine

vi /etc/hosts

192.168.0.10 k8s-master1

192.168.0.11 k8s-master2

192.168.0.12 k8s-master3

192.168.0.13 k8s-node1

192.168.0.14 k8s-node2

192.168.0.15 k8s-node3

192.168.0.250 k8s-master-lb # Not needed for a non-HA cluster; this VIP is managed by keepalived

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

swapoff -a && sysctl -w vm.swappiness=0

sed -ri 's/.*swap.*/#&/' /etc/fstab

ulimit -SHn 65535

vi /etc/security/limits.conf

# Append the following at the end of the file

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

ssh-keygen -t rsa

for i in k8s-master1 k8s-master2 k8s-master3 k8s-node1 k8s-node2 k8s-node3;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

yum install wget git jq psmisc net-tools yum-utils device-mapper-persistent-data lvm2 -y

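# Install the ipvs tooling so that ipvs, ipset, conntrack and friends are easy to work with later

yum install ipvsadm ipset sysstat conntrack libseccomp -y

# Load the ipvs modules on all nodes. On kernel 4.19+ the conntrack module is nf_conntrack; on 4.18 and below use nf_conntrack_ipv4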

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

# Edit the ipvs module configuration and add the following entries

vi /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

# Load the modules now and on every boot

systemctl enable --now systemd-modules-load.service #--now = enable+start

# Check that the modules are loaded

lsmod | grep -e ip_vs -e nf_conntrack
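The `lsmod | grep` check above prints whatever happens to be loaded, which makes a missing module easy to overlook. A minimal sketch that reports only what is missing, assuming the required set is exactly the five modules loaded with `modprobe` earlier; the function name `missing_modules` and the `REQUIRED_MODULES` variable are hypothetical helpers, not part of any tool:

```shell
# Modules this guide loads with modprobe (adjust to your own list).
REQUIRED_MODULES="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack"

# Reads lsmod-style text on stdin and prints every required module
# that does not appear in the first column.
missing_modules() {
  loaded=$(awk 'NR > 1 { print $1 }')   # skip the lsmod header line
  for m in $REQUIRED_MODULES; do
    printf '%s\n' "$loaded" | grep -qx "$m" || printf '%s\n' "$m"
  done
}

# Usage on a real node (prints nothing when everything is loaded):
#   lsmod | missing_modules
```

An empty result means every module in the list is present; any printed name can be loaded with `modprobe` and added to /etc/modules-load.d/ipvs.conf.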

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

net.ipv4.conf.all.route_localnet = 1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16768

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16768

EOF

sysctl --system

reboot

# After the reboot, confirm that the modules are still loaded

lsmod | grep -e ip_vs -e nf_conntrack

2. Install Docker

yum remove docker*

yum install -y yum-utils

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce-19.03.9 docker-ce-cli-19.03.9 containerd.io-1.4.4

mkdir /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://82m9ayutr63.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload && systemctl enable --now docker

# Offline alternative: download the RPM packages from the mirror below and install them locally

http://mirrors.aliyun.com/docker-ce/linux/centos/7.9/x86_64/stable/Packages/

yum localinstall xxxx

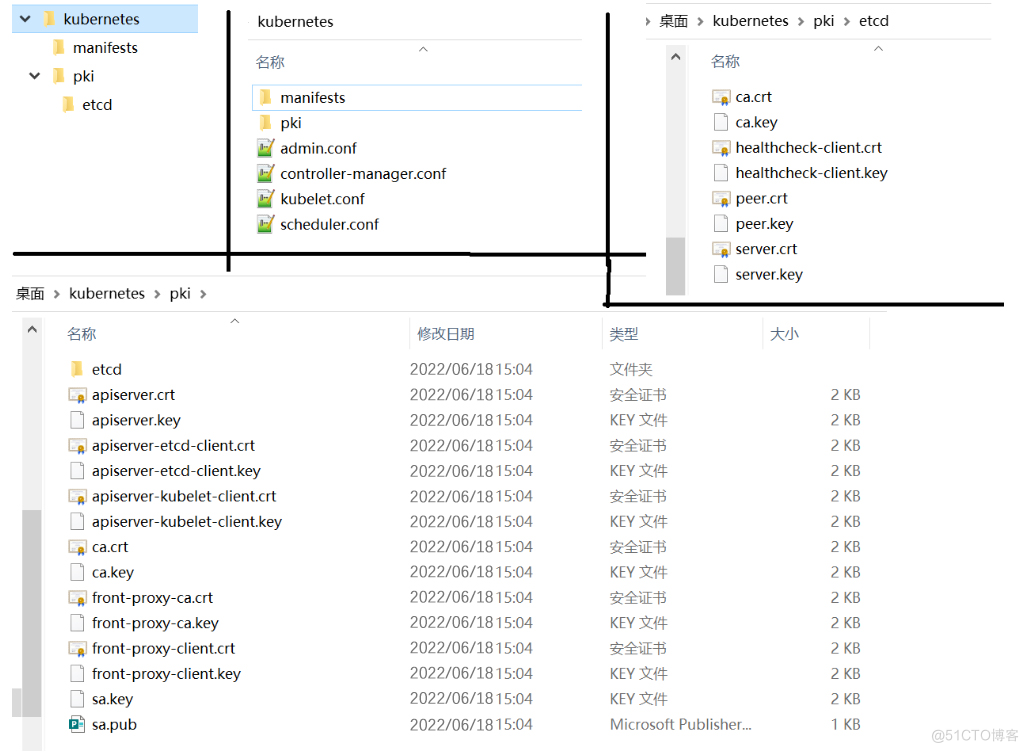

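II. PKI

Baidu Baike: Public key infrastructure (公钥基础设施)

Kubernetes requires PKI for the following operations:

- Client certificates for the kubelet to authenticate to the API server

- Server certificate for the API server endpoint

- Client certificates for administrators of the cluster to authenticate to the API server

- Client certificates for the API server to talk to the kubelets

- Client certificate for the API server to talk to etcd

- Client certificate/kubeconfig for the controller manager to talk to the API server

- Client certificate/kubeconfig for the scheduler to talk to the API server

- Client and server certificates for the front-proxy

Note: front-proxy certificates are required only if you run kube-proxy to support an extension API server.

etcd also implements mutual TLS to authenticate clients and peers.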

III. Preparing the certificate tools

# Run on all three master nodes

mkdir -p /etc/kubernetes/pki

1. Download the certificate tools

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl-certinfo_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssljson_1.5.0_linux_amd64

# Make the binaries executable

chmod +x cfssl*

# Strip the version suffix from each file name

for name in `ls cfssl*`; do mv $name ${name%_1.5.0_linux_amd64}; done

# Move the binaries into /usr/bin

mv cfssl* /usr/bin

2. Root CA configuration

ca-config.json

cd /etc/kubernetes/pki

vi ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"server": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

},

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

},

"etcd": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

3. CA certificate signing request

CSR stands for Certificate Signing Request, the file that describes the certificate to be signed.

ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes"

}

],

"ca": {

"expiry": "87600h"

}

}

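- CN (Common Name):

- The common name is required; for a website certificate it is usually the domain name.

- O (Organization):

- The organization name is required. For OV and EV certificates it must match the name registered with the government exactly, usually the name on the business license. Abbreviations and trademarks are not allowed. An English name requires a DUNS number or a lawyer's letter as proof.

- OU (Organizational Unit)

- The department. There are few restrictions here; something like IT DEPT is fine.

- L (Locality)

- The city where the applicant is located.

- ST (State/Province)

- The province where the applicant is located.

- C (Country Name)

- The country, given as a two-letter uppercase country code. China is CN.

4. Generate the certificates

Generate the CA certificate and private key

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# Produces ca.csr, ca.pem (the CA certificate) and ca-key.pem (the CA private key; keep it safe)

5. How the k8s cluster uses certificates

See the official documentation: PKI certificates and requirements | Kubernetes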

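IV. Building a highly available etcd cluster

1. etcd documentation

etcd demo: Demo | etcd. Work through the examples to learn how to use etcd.

etcd build: Install | etcd. Follow the etcd sizing guidance for k8s clusters when planning your own cluster.

etcd deployment: Operations guide | etcd. Follow the operations guide for etcd configuration and cluster deployment.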

2. Download etcd

wget https://github.com/etcd-io/etcd/releases/download/v3.4.16/etcd-v3.4.16-linux-amd64.tar.gz

## Copy the archive to the other nodes

for i in k8s-master1 k8s-master2 k8s-master3;do scp etcd-* root@$i:/root/;done

## Extract etcd and etcdctl into /usr/local/bin

tar -zxvf etcd-v3.4.16-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.4.16-linux-amd64/etcd{,ctl}

## Verify

etcdctl # any output means the binary works

3. etcd certificates

etcd installation reference: Hardware recommendations | etcd

Generate the etcd certificates

etcd-ca-csr.json

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "etcd"

}

],

"ca": {

"expiry": "87600h"

}

}

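# The output directory must exist before cfssljson writes into it

mkdir -p /etc/kubernetes/pki/etcd

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/etcd/ca -

etcd-itdachang-csr.json

{

"CN": "etcd-itdachang",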

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"k8s-master1",

"k8s-master2",

"k8s-master3",

"192.168.0.10",

"192.168.0.11",

"192.168.0.12"

],

"names": [

{

"C": "CN",

"L": "beijing",

"O": "etcd",

"ST": "beijing",

"OU": "System"

}

]

}

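// Note: use your own hostnames and IPs in hosts

// The hosts can also be supplied at signing time with -hostname=127.0.0.1,k8s-master1,k8s-master2,k8s-master3,

// This sets the list of trusted hosts, for example:

// "hosts": [

// "k8s-master1",

// "www.example.net"

// ],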

cfssl gencert \

-ca=/etc/kubernetes/pki/etcd/ca.pem \

-ca-key=/etc/kubernetes/pki/etcd/ca-key.pem \

-config=/etc/kubernetes/pki/ca-config.json \

-profile=etcd \

etcd-itdachang-csr.json | cfssljson -bare /etc/kubernetes/pki/etcd/etcd

for i in k8s-master2 k8s-master3;do scp -r /etc/kubernetes/pki/etcd root@$i:/etc/kubernetes/pki;done

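4. Highly available etcd installation

etcd configuration file example: Configuration flags | etcd

etcd HA installation example: Clustering Guide | etcd

To keep the startup configuration consistent across nodes, we write an etcd configuration file and run etcd as a systemd service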

# This is the configuration file for the etcd server.

# Human-readable name for this member.

name: 'default'

# Path to the data directory.

data-dir:

# Path to the dedicated wal directory.

wal-dir:

# Number of committed transactions to trigger a snapshot to disk.

snapshot-count: 10000

# Time (in milliseconds) of a heartbeat interval.

heartbeat-interval: 100

# Time (in milliseconds) for an election to timeout.

election-timeout: 1000

# Raise alarms when backend size exceeds the given quota. 0 means use the

# default quota.

quota-backend-bytes: 0

# List of comma separated URLs to listen on for peer traffic.

listen-peer-urls: http://localhost:2380

# List of comma separated URLs to listen on for client traffic.

listen-client-urls: http://localhost:2379

# Maximum number of snapshot files to retain (0 is unlimited).

max-snapshots: 5

# Maximum number of wal files to retain (0 is unlimited).

max-wals: 5

# Comma-separated white list of origins for CORS (cross-origin resource sharing).

cors:

# List of this member's peer URLs to advertise to the rest of the cluster.

# The URLs needed to be a comma-separated list.

initial-advertise-peer-urls: http://localhost:2380

# List of this member's client URLs to advertise to the public.

# The URLs needed to be a comma-separated list.

advertise-client-urls: http://localhost:2379

# Discovery URL used to bootstrap the cluster.

discovery:

# Valid values include 'exit', 'proxy'

discovery-fallback: 'proxy'

# HTTP proxy to use for traffic to discovery service.

discovery-proxy:

# DNS domain used to bootstrap initial cluster.

discovery-srv:

# Initial cluster configuration for bootstrapping.

initial-cluster:

# Initial cluster token for the etcd cluster during bootstrap.

initial-cluster-token: 'etcd-cluster'

# Initial cluster state ('new' or 'existing').

initial-cluster-state: 'new'

# Reject reconfiguration requests that would cause quorum loss.

strict-reconfig-check: false

# Accept etcd V2 client requests

enable-v2: true

# Enable runtime profiling data via HTTP server

enable-pprof: true

# Valid values include 'on', 'readonly', 'off'

proxy: 'off'

# Time (in milliseconds) an endpoint will be held in a failed state.

proxy-failure-wait: 5000

# Time (in milliseconds) of the endpoints refresh interval.

proxy-refresh-interval: 30000

# Time (in milliseconds) for a dial to timeout.

proxy-dial-timeout: 1000

# Time (in milliseconds) for a write to timeout.

proxy-write-timeout: 5000

# Time (in milliseconds) for a read to timeout.

proxy-read-timeout: 0

client-transport-security:

# Path to the client server TLS cert file.

cert-file:

# Path to the client server TLS key file.

key-file:

# Enable client cert authentication.

client-cert-auth: false

# Path to the client server TLS trusted CA cert file.

trusted-ca-file:

# Client TLS using generated certificates

auto-tls: false

peer-transport-security:

# Path to the peer server TLS cert file.

cert-file:

# Path to the peer server TLS key file.

key-file:

# Enable peer client cert authentication.

client-cert-auth: false

# Path to the peer server TLS trusted CA cert file.

trusted-ca-file:

# Peer TLS using generated certificates.

auto-tls: false

# Enable debug-level logging for etcd.

debug: false

logger: zap

# Specify 'stdout' or 'stderr' to skip journald logging even when running under systemd.

log-outputs: [stderr]

# Force to create a new one member cluster.

force-new-cluster: false

auto-compaction-mode: periodic

auto-compaction-retention: "1"

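Create the /etc/etcd directory on all three etcd machines to hold the etcd configuration, then write the configuration below to /etc/etcd/etcd.yaml

mkdir -p /etc/etcd

vi /etc/etcd/etcd.yaml

name: 'etcd-master3' # use a unique name per machine; it must match this machine's entry in initial-cluster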

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.0.12:2380' # this machine's IP and port 2380, used for traffic between cluster members

listen-client-urls: 'https://192.168.0.12:2379,http://127.0.0.1:2379' # change to this machine's IP

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.0.12:2380' # this machine's IP

advertise-client-urls: 'https://192.168.0.12:2379' # this machine's IP

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'etcd-master1=https://192.168.0.10:2380,etcd-master2=https://192.168.0.11:2380,etcd-master3=https://192.168.0.12:2380' # lists every member; differs from the sample above

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'

  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/kubernetes/pki/etcd/ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false
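Only a handful of values in this file differ between the three machines: `name`, the listen and advertise URLs, and nothing else; `initial-cluster` is identical everywhere. A minimal sketch that derives those values from one node list, so they are not edited by hand three times. The variable `ETCD_NODES` and the function names are hypothetical helpers; the names and IPs are the ones used in this guide:

```shell
# name=ip pairs for the three etcd members used in this guide.
ETCD_NODES="etcd-master1=192.168.0.10 etcd-master2=192.168.0.11 etcd-master3=192.168.0.12"

# Prints the value for initial-cluster (the same on every member).
initial_cluster() {
  out=""
  for pair in $ETCD_NODES; do
    name=${pair%%=*}
    ip=${pair#*=}
    out="${out:+$out,}${name}=https://${ip}:2380"
  done
  printf '%s\n' "$out"
}

# Prints the per-member settings for the member with the given name.
member_settings() {
  for pair in $ETCD_NODES; do
    if [ "${pair%%=*}" = "$1" ]; then
      ip=${pair#*=}
      printf "name: '%s'\n" "$1"
      printf "listen-peer-urls: 'https://%s:2380'\n" "$ip"
      printf "listen-client-urls: 'https://%s:2379,http://127.0.0.1:2379'\n" "$ip"
      printf "initial-advertise-peer-urls: 'https://%s:2380'\n" "$ip"
      printf "advertise-client-urls: 'https://%s:2379'\n" "$ip"
    fi
  done
}

initial_cluster
member_settings etcd-master1
```

The printed lines can be pasted into /etc/etcd/etcd.yaml on the matching machine; the rest of the file stays the same on all three.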

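Register etcd as a systemd service on all three machines so that it starts on boot (for example in /usr/lib/systemd/system/etcd.service)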

[Unit]

Description=Etcd Service

Documentation=https://etcd.io/docs/v3.4/op-guide/clustering/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.yaml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

systemctl daemon-reload

systemctl enable --now etcd

# If startup fails, troubleshoot with journalctl -u <service name>

journalctl -u etcd

Test access to etcd

etcdctl --endpoints="192.168.0.10:2379,192.168.0.11:2379,192.168.0.12:2379" --cacert=/etc/kubernetes/pki/etcd/ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table

# Commands for later testing

export ETCDCTL_API=3

HOST_1=192.168.0.10

HOST_2=192.168.0.11

HOST_3=192.168.0.12

ENDPOINTS=$HOST_1:2379,$HOST_2:2379,$HOST_3:2379

## Export environment variables to simplify testing; see https://github.com/etcd-io/etcd/tree/main/etcdctl

export ETCDCTL_DIAL_TIMEOUT=3s

export ETCDCTL_CACERT=/etc/kubernetes/pki/etcd/ca.pem

export ETCDCTL_CERT=/etc/kubernetes/pki/etcd/etcd.pem

export ETCDCTL_KEY=/etc/kubernetes/pki/etcd/etcd-key.pem

export ETCDCTL_ENDPOINTS=$HOST_1:2379,$HOST_2:2379,$HOST_3:2379

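# The certificate locations are picked up from the environment variables

etcdctl member list --write-out=table

# Without the environment variables, pass everything explicitly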

etcdctl --endpoints=$ENDPOINTS --cacert=/etc/kubernetes/pki/etcd/ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem member list --write-out=table

## More etcdctl commands: https://etcd.io/docs/v3.4/demo/#access-etcd

V. k8s components and certificates

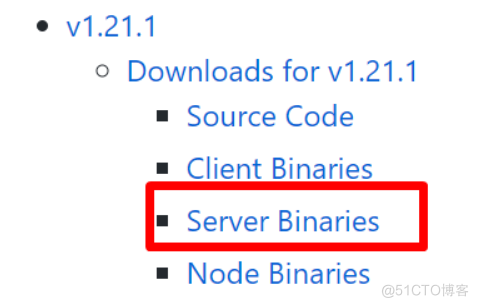

1. k8s offline installation package

https://github.com/kubernetes/kubernetes: find the changelog for the version you want

wget https://dl.k8s.io/v1.21.1/kubernetes-server-linux-amd64.tar.gz

2. Prepare the master nodes

for i in k8s-master1 k8s-master2 k8s-master3 k8s-node1 k8s-node2 k8s-node3;do scp kubernetes-server-* root@$i:/root/;done

tar -xvf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

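# Masters need every component; worker nodes only need kubelet and kube-proxy in /usr/local/bin

3. Generate the apiserver certificate

3.1 apiserver-csr.json

// 192.168.0.250 is the load balancer address I prepared (you can build your own load balancer or buy one from a cloud provider).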

{

"CN": "kube-apiserver",

"hosts": [

"10.96.0.1",

"127.0.0.1",

"192.168.0.250",

"192.168.0.10",

"192.168.0.11",

"192.168.0.12",

"192.168.0.13",

"192.168.0.14",

"192.168.0.15",

"192.168.0.16",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "Kubernetes",

"OU": "Kubernetes"

}

]

}

3.2 Generate the apiserver certificate

# If this is not an HA cluster, replace 192.168.0.250 in apiserver-csr.json with the IP of master1

# Create the CA first

vi ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes"

}

],

"ca": {

"expiry": "87600h"

}

}

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=/etc/kubernetes/pki/ca-config.json -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

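4. Generate the front-proxy certificates

Official documentation: Configure the aggregation layer | Kubernetes

Note: signing the front-proxy certificates with a new, separate CA is not recommended, because components proxied through it, such as metrics-server, may lose access.

If you do use a new CA, add --requestheader-allowed-names=front-proxy-client to the api-server configuration

4.1 front-proxy-ca-csr.json

front-proxy root CA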

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

}

}

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

4.2 front-proxy-client certificate

front-proxy-client-csr.json

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

# The warning can be ignored; this certificate is not for a website

5. controller-manager certificate and configuration

5.1 controller-manager-csr.json

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes"

}

]

}

5.2 Generate the certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

controller-manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

5.3 Generate the kubeconfig

# set-cluster: define a cluster entry

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.0.250:6443 \

--kubeconfig=/etc/kubernetes/controller-manager.conf

# set-context: define a context entry

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/controller-manager.conf

# set-credentials: define a user entry

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/controller-manager.pem \

--client-key=/etc/kubernetes/pki/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/controller-manager.conf

# use-context: make this context the default

kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/etc/kubernetes/controller-manager.conf

# The controller manager is later also used to approve kubelet certificates automatically

6. scheduler certificate and configuration

6.1 scheduler-csr.json

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes"

}

]

}

6.2 Sign the certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/pki/ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

6.3 Generate the kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.0.250:6443 \

--kubeconfig=/etc/kubernetes/scheduler.conf

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/etc/kubernetes/pki/scheduler.pem \

--client-key=/etc/kubernetes/pki/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/scheduler.conf

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/scheduler.conf

kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/etc/kubernetes/scheduler.conf

# Related to secure operation of the k8s cluster

7. admin certificate and configuration

7.1 admin-csr.json

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes"

}

]

}

7.2 Generate the certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/pki/ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

7.3 Generate the kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.0.250:6443 \

--kubeconfig=/etc/kubernetes/admin.conf

kubectl config set-credentials kubernetes-admin \

--client-certificate=/etc/kubernetes/pki/admin.pem \

--client-key=/etc/kubernetes/pki/admin-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/admin.conf

kubectl config set-context kubernetes-admin@kubernetes \

--cluster=kubernetes \

--user=kubernetes-admin \

--kubeconfig=/etc/kubernetes/admin.conf

kubectl config use-context kubernetes-admin@kubernetes \

--kubeconfig=/etc/kubernetes/admin.conf
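Sections 5.3, 6.3 and 7.3 run the same four `kubectl config` subcommands with different names and paths. A minimal sketch of a wrapper that captures the pattern, assuming the CA path and the cluster name `kubernetes` used throughout this guide; the function name `write_kubeconfig` and its argument order are hypothetical, while every kubectl flag is one already used above:

```shell
# write_kubeconfig USER CERT KEY FILE [SERVER]
# Builds a kubeconfig with one cluster, one user and one default context.
write_kubeconfig() {
  user=$1
  cert=$2
  key=$3
  file=$4
  server=${5:-https://192.168.0.250:6443}   # load balancer address from this guide

  kubectl config set-cluster kubernetes \
    --certificate-authority=/etc/kubernetes/pki/ca.pem \
    --embed-certs=true \
    --server="$server" \
    --kubeconfig="$file" &&
  kubectl config set-credentials "$user" \
    --client-certificate="$cert" \
    --client-key="$key" \
    --embed-certs=true \
    --kubeconfig="$file" &&
  kubectl config set-context "$user@kubernetes" \
    --cluster=kubernetes \
    --user="$user" \
    --kubeconfig="$file" &&
  kubectl config use-context "$user@kubernetes" \
    --kubeconfig="$file"
}

# Example, equivalent to section 7.3 (not executed here):
#   write_kubeconfig kubernetes-admin \
#     /etc/kubernetes/pki/admin.pem /etc/kubernetes/pki/admin-key.pem \
#     /etc/kubernetes/admin.conf
```

Chaining with `&&` stops at the first failing step, so a missing certificate does not leave a half-written context set as the default.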

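The kubelet will use the bootstrap mechanism to obtain its certificates automatically, so no kubelet certificates are configured here. Otherwise, with 10,000 machines and 10,000 kubelets, configuring certificates by hand would take until next year.

8. Generate the ServiceAccount key

Under the hood, k8s assigns a Secret to every ServiceAccount that is created, and the token in that Secret is signed with the sa key pair generated here, so we create the key pair in advance.

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

9. Send the certificates to the other nodes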

for NODE in k8s-master2 k8s-master3

do

for FILE in admin.conf controller-manager.conf scheduler.conf

do

scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}

done

done

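VI. High availability configuration

- High availability configuration

- If you are not building an HA cluster, there is no need to configure haproxy and keepalived

- There are many options for high availability

- nginx

- haproxy

- keepalived

- A load balancer product from a cloud provider

- Notes for installing in the cloud

- In the cloud you can use the provider's load balancer directly, such as Alibaba Cloud SLB or Tencent Cloud ELB

- On a public cloud, use the provider's own load balancer (Alibaba Cloud SLB, Tencent Cloud ELB) in place of haproxy and keepalived, because most public clouds do not support keepalived.

- On Alibaba Cloud, the kubectl client cannot run on a master node. Tencent Cloud is recommended, because the Alibaba Cloud SLB has a loopback problem: servers behind the SLB cannot reach the SLB themselves, whereas Tencent Cloud has fixed this.

- Create the load balancer and assign it the IP address reserved earlier

- Open the load balancer and create a listener

- Choose TCP, port 6443

- Add the backend server addresses and ports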
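For a self-managed setup, the listener described above (TCP on port 6443, three apiserver backends) could be expressed with haproxy. This is a minimal sketch rather than the author's configuration: it assumes haproxy runs on dedicated load balancer hosts, not on the masters (where `bind *:6443` would collide with kube-apiserver), and it leaves the keepalived side that owns 192.168.0.250 out entirely. The output path variable `HAPROXY_CFG` and the timeout values are illustrative assumptions:

```shell
# Hypothetical output path; /etc/haproxy/haproxy.cfg is the usual location.
HAPROXY_CFG="${HAPROXY_CFG:-./haproxy.cfg}"

# name=ip pairs for the three masters used in this guide.
MASTERS="k8s-master1=192.168.0.10 k8s-master2=192.168.0.11 k8s-master3=192.168.0.12"

{
  cat <<'EOF'
global
    maxconn 4000

defaults
    mode tcp
    timeout connect 5s
    timeout client  1m
    timeout server  1m

frontend k8s-apiserver
    bind *:6443
    default_backend k8s-masters

backend k8s-masters
    balance roundrobin
    option tcp-check
EOF
  # One backend line per master; "check" enables TCP health checks.
  for pair in $MASTERS; do
    printf '    server %s %s:6443 check\n' "${pair%%=*}" "${pair#*=}"
  done
} > "$HAPROXY_CFG"
```

TCP mode is used because the apiserver terminates TLS itself with the certificate generated earlier; the load balancer only forwards the connection.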

VII. Starting the components

1. Run on all masters

# On the three master nodes, all kube-xx binaries live in /usr/local/bin

for NODE in k8s-master2 k8s-master3

do

scp -r /etc/kubernetes/* root@$NODE:/etc/kubernetes/

done

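The loop above sends every certificate generated on master1 to master2 and master3

2. Configure the apiserver service

2.1 Configuration

Create kube-apiserver.service on all master nodes

Note: if this is not an HA cluster, change 192.168.0.250 to the address of master1

The k8s Service network used in this document is 10.96.0.0/16. It must not overlap with the host network or the Pod network. Note in particular that the default Docker bridge is 172.17.0.1/16; do not use that range.

# --advertise-address: change to the IP of this master node

# --service-cluster-ip-range=10.96.0.0/16: change to the Service network you planned

# --etcd-servers: change to the addresses of all of your etcd servers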

vi /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--logtostderr=true \

--allow-privileged=true \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--insecure-port=0 \

--advertise-address=192.168.0.10 \

--service-cluster-ip-range=10.96.0.0/16 \

--service-node-port-range=30000-32767 \

--etcd-servers=https://192.168.0.10:2379,https://192.168.0.11:2379,https://192.168.0.12:2379 \

--etcd-cafile=/etc/kubernetes/pki/etcd/ca.pem \

--etcd-certfile=/etc/kubernetes/pki/etcd/etcd.pem \

--etcd-keyfile=/etc/kubernetes/pki/etcd/etcd-key.pem \

--client-ca-file=/etc/kubernetes/pki/ca.pem \

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/pki/sa.pub \

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator,front-proxy-client \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

2.2 Start the apiserver service

systemctl daemon-reload && systemctl enable --now kube-apiserver

# Check the status

systemctl status kube-apiserver

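3. Configure the controller-manager service

3.1 Configuration

Configure kube-controller-manager.service on all master nodes

The k8s Pod network used in this document is 196.16.0.0/16. It must not overlap with the host network or the k8s Service network; change it as needed. Note in particular that the default Docker bridge is 172.17.0.1/16; do not use that range.

vi /usr/lib/systemd/system/kube-controller-manager.service

## --cluster-cidr=196.16.0.0/16: the Pod network; change it to the range you planned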

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \

--v=2 \

--logtostderr=true \

--address=127.0.0.1 \

--root-ca-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \

--service-account-private-key-file=/etc/kubernetes/pki/sa.key \

--kubeconfig=/etc/kubernetes/controller-manager.conf \

--leader-elect=true \

--use-service-account-credentials=true \

--node-monitor-grace-period=40s \

--node-monitor-period=5s \

--pod-eviction-timeout=2m0s \

--controllers=*,bootstrapsigner,tokencleaner \

--allocate-node-cidrs=true \

--cluster-cidr=196.16.0.0/16 \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

3.2 Start

systemctl daemon-reload

systemctl daemon-reload && systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

4. Configure the scheduler

4.1 Configuration

Configure kube-scheduler.service on all master nodes

vi /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--v=2 \

--logtostderr=true \

--address=127.0.0.1 \

--leader-elect=true \

--kubeconfig=/etc/kubernetes/scheduler.conf

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

4.2 Start

systemctl daemon-reload && systemctl enable --now kube-scheduler

systemctl status kube-scheduler

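VIII. TLS and the bootstrap mechanism

1. Configure bootstrap on master1

Note: if this is not an HA cluster, change 192.168.0.250:6443 to the address of master1; 6443 is the default apiserver port.

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

# Example value: 737b177d9823531a433e368fcdb16f5f

# Generate a 16-character value (used below as the token secret)

head -c 8 /dev/urandom | od -An -t x | tr -d ' '

# d683399b7a553977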
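A bootstrap token has the form `<token-id>.<token-secret>`, where the id is 6 characters and the secret is 16 characters from `[a-z0-9]`, as in `l6fy8c.d683399b7a553977` used below. A minimal sketch that generates both halves with the same `/dev/urandom` approach and checks the format before the value is written into any file. The helper names `gen_hex` and `is_valid_bootstrap_token` are hypothetical:

```shell
# Prints 2*N lowercase hex characters read from /dev/urandom.
gen_hex() {
  head -c "$1" /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Succeeds only if the argument looks like <6 chars>.<16 chars> from [a-z0-9].
is_valid_bootstrap_token() {
  printf '%s' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

TOKEN_ID=$(gen_hex 3)        # 3 bytes  -> 6 hex characters
TOKEN_SECRET=$(gen_hex 8)    # 8 bytes  -> 16 hex characters
BOOTSTRAP_TOKEN="${TOKEN_ID}.${TOKEN_SECRET}"

if is_valid_bootstrap_token "$BOOTSTRAP_TOKEN"; then
  printf 'token-id: %s\ntoken-secret: %s\n' "$TOKEN_ID" "$TOKEN_SECRET"
else
  printf 'generated token has an unexpected format\n' >&2
fi
```

If you generate your own token this way, use the same id and secret in `--token=` below, in the Secret name `bootstrap-token-<token-id>`, and in its `token-id` and `token-secret` fields.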

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.0.250:6443 \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf

# Set the credentials (bootstrap token)

kubectl config set-credentials tls-bootstrap-token-user \

--token=l6fy8c.d683399b7a553977 \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf

# Set the context

kubectl config set-context tls-bootstrap-token-user@kubernetes \

--cluster=kubernetes \

--user=tls-bootstrap-token-user \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf

# Use the context

kubectl config use-context tls-bootstrap-token-user@kubernetes \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf

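2. Give kubectl on master1 access to the cluster

Whether kubectl can operate the cluster depends on whether a config file exists under /root/.kube. That config is the admin.conf generated earlier, which carries administrative permissions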

mkdir -p /root/.kube ;

cp /etc/kubernetes/admin.conf /root/.kube/config

kubectl get nodes

# Port 6443 on the load balancer must be reachable on the network; by default no extra configuration should be needed

[root@k8s-master1 ~]# kubectl get nodes

No resources found

# This shows that kubectl can already reach the apiserver and query resources

3. Create the cluster bootstrap permissions file

vi /etc/kubernetes/bootstrap.secret.yaml

apiVersion: v1
kind: Secret
metadata:
  name: bootstrap-token-l6fy8c
  namespace: kube-system
type: bootstrap.kubernetes.io/token
stringData:
  description: "The default bootstrap token generated by 'kubelet '."
  token-id: l6fy8c
  token-secret: d683399b7a553977
  usage-bootstrap-authentication: "true"
  usage-bootstrap-signing: "true"
  auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubelet-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node-bootstrapper
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: Group
    name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:nodeclient
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: Group
    name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-certificate-rotation
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: Group
    name: system:nodes
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kube-apiserver

kubectl create -f /etc/kubernetes/bootstrap.secret.yaml

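IX. Bootstrapping the Node kubelets

The kubelet on every node has to be bootstrapped by us

1. Send the core certificates to the nodes

From master1, send the core certificates to the other nodes

# Copy the certificates and the bootstrap kubeconfig to every node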

for NODE in k8s-master2 k8s-master3 k8s-node1 k8s-node2; do

ssh $NODE mkdir -p /etc/kubernetes/pki/etcd

for FILE in ca.pem etcd.pem etcd-key.pem; do

scp /etc/kubernetes/pki/etcd/$FILE $NODE:/etc/kubernetes/pki/etcd/

done

for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.conf; do

scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}

done

done

2. Configure kubelet on all nodes

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

## Every node must have kubelet and kube-proxy

for NODE in k8s-master2 k8s-master3 k8s-node3 k8s-node1 k8s-node2; do

scp -r /etc/kubernetes/* root@$NODE:/etc/kubernetes/

done

2.1 Create kubelet.service

vi /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

vi /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf"

Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/pause:3.4.1"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

2.2 Create the kubelet-conf.yml file

vi /etc/kubernetes/kubelet-conf.yml

# clusterDNS is the 10th IP of the Service network; change it to match your own range, for example 10.96.0.10

apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s # reduce this value if needed
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s

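2.3. Start kubelet on all nodes

systemctl daemon-reload && systemctl enable --now kubelet

systemctl status kubelet

The status output will report "Unable to update cni config". This is expected at this stage; it goes away once the CNI network is configured in the steps that follow.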

3. Configure kube-proxy

Note: if this is not a high-availability cluster, change 192.168.0.250:6443 to the address of master1 and the apiserver port (6443 by default).

3.1. Generate kube-proxy.conf

Run the following on master1.

kubectl -n kube-system create serviceaccount kube-proxy

# Create the cluster role binding

kubectl create clusterrolebinding system:kube-proxy \

--clusterrole system:node-proxier \

--serviceaccount kube-system:kube-proxy

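# Export variables for later use

# Note: .secrets[0] is only populated on Kubernetes versions before 1.24, which auto-create a token Secret for each ServiceAccount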

SECRET=$(kubectl -n kube-system get sa/kube-proxy --output=jsonpath='{.secrets[0].name}')

JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET --output=jsonpath='{.data.token}' | base64 -d)

PKI_DIR=/etc/kubernetes/pki

K8S_DIR=/etc/kubernetes

# Generate the kube-proxy kubeconfig

# --server: your own apiserver address or load balancer address

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.0.250:6443 \

--kubeconfig=${K8S_DIR}/kube-proxy.conf

# Set the kube-proxy credentials

kubectl config set-credentials kubernetes \

--token=${JWT_TOKEN} \

--kubeconfig=/etc/kubernetes/kube-proxy.conf

kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=kubernetes \

--kubeconfig=/etc/kubernetes/kube-proxy.conf

kubectl config use-context kubernetes \

--kubeconfig=/etc/kubernetes/kube-proxy.conf

for NODE in k8s-master2 k8s-master3 k8s-node1 k8s-node2 k8s-node3; do

scp /etc/kubernetes/kube-proxy.conf $NODE:/etc/kubernetes/

done
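The `JWT_TOKEN` embedded in the kubeconfig is a JSON Web Token, so its middle segment can be decoded to confirm which ServiceAccount it belongs to. The following is a minimal sketch, not part of the original steps; the helper name `jwt_payload` is hypothetical and GNU `base64` is assumed.

```shell
# jwt_payload (hypothetical helper): print the decoded payload segment of a JWT.
jwt_payload() {
  # The payload is the second dot-separated segment, encoded as base64url.
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops the trailing '=' padding; restore it before decoding.
  while [ $(( ${#seg} % 4 )) -ne 0 ]; do
    seg="${seg}="
  done
  printf '%s' "$seg" | base64 -d
}

# Usage on master1, after JWT_TOKEN has been exported:
#   jwt_payload "$JWT_TOKEN"
```

For the token generated here, the decoded JSON should name the `kube-proxy` ServiceAccount in the `kube-system` namespace.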

3.2. Configure kube-proxy.service

vi /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube Proxy

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--v=2

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

3.3. Prepare kube-proxy.yaml

Be sure to change the Pod CIDR to match your own.

vi /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.conf  # kubeconfig used by kube-proxy
  qps: 5
clusterCIDR: 196.16.0.0/16  # change to your own Pod CIDR
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
kind: KubeProxyConfiguration
metricsBindAddress: 127.0.0.1:10249
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
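Because `clusterCIDR` has to stay clear of both the Service range and the machines' own subnet, a quick overlap check before starting kube-proxy can catch a copy-paste mistake. The sketch below uses only shell arithmetic. The function names are hypothetical, and the machine subnet is assumed to be `192.168.0.0/24` (the article only states `192.168.0.x`).

```shell
# ip_to_int: convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_range: print the first and last address of a CIDR block as integers.
cidr_range() {
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  base=$(ip_to_int "${1%/*}")
  start=$(( base / size * size ))   # align to the network boundary
  echo "$start $(( start + size - 1 ))"
}

# cidrs_overlap: succeed (exit 0) if the two CIDR blocks share any address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

POD_CIDR="196.16.0.0/16"
SERVICE_CIDR="10.96.0.0/16"
HOST_CIDR="192.168.0.0/24"   # assumption: the machines sit in a /24

for other in "$SERVICE_CIDR" "$HOST_CIDR"; do
  if cidrs_overlap "$POD_CIDR" "$other"; then
    echo "conflict: $POD_CIDR overlaps $other"
  else
    echo "ok: $POD_CIDR does not overlap $other"
  fi
done
```

Calico's default pool `192.168.0.0/16` would fail this check against the machine subnet, which is one practical reason to move the Pod network to a range such as `196.16.0.0/16`.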

3.4. Start kube-proxy

Start it on all nodes:

systemctl daemon-reload && systemctl enable --now kube-proxy

systemctl status kube-proxy
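Checking every machine by hand gets tedious with six nodes. The sketch below reuses the passwordless SSH set up earlier to report whether kubelet and kube-proxy are both active on each node; `all_active` and `check_nodes` are hypothetical helper names, not part of the original article.

```shell
# all_active: read `systemctl is-active` output on stdin (one state per line)
# and succeed only if there is at least one line and every line is "active".
all_active() {
  states=$(cat)
  [ -n "$states" ] && ! printf '%s\n' "$states" | grep -qv '^active$'
}

# check_nodes: query both services on each node given as an argument.
check_nodes() {
  for node in "$@"; do
    if ssh "$node" systemctl is-active kubelet kube-proxy | all_active; then
      echo "$node: kubelet and kube-proxy are active"
    else
      echo "$node: at least one service is NOT active"
    fi
  done
}

# Usage from master1:
#   check_nodes k8s-master1 k8s-master2 k8s-master3 k8s-node1 k8s-node2 k8s-node3
```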

X. Deploy Calico

See the Calico on-premises (private cloud) deployment guide for reference.

curl https://docs.projectcalico.org/manifests/calico-etcd.yaml -o calico.yaml

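## Change the images in this manifest to a mirror registry reachable from mainland China

# Apply our own customizations. Set the etcd cluster endpoints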

sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://192.168.0.10:2379,https://192.168.0.11:2379,https://192.168.0.12:2379"#g' calico.yaml

# The etcd certificate contents must be base64-encoded before being set in the YAML

ETCD_CA=`cat /etc/kubernetes/pki/etcd/ca.pem | base64 -w 0 `

ETCD_CERT=`cat /etc/kubernetes/pki/etcd/etcd.pem | base64 -w 0 `

ETCD_KEY=`cat /etc/kubernetes/pki/etcd/etcd-key.pem | base64 -w 0 `

# Substitute the base64-encoded etcd certificates into the manifest

sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico.yaml
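The `-w 0` option matters here: GNU `base64` wraps its output at 76 characters by default, and a wrapped value would break the single-line substitution. A minimal sketch that shows the difference on a throwaway file (the temporary file merely stands in for a certificate and is not part of the article's setup):

```shell
# Create a throwaway file longer than one base64 output line (76 characters).
demo=$(mktemp)
head -c 200 /dev/zero | tr '\0' 'A' > "$demo"

wrapped=$(base64 < "$demo")        # default: output wrapped at 76 columns
single=$(base64 -w 0 < "$demo")    # -w 0: one continuous line

echo "wrapped lines: $(printf '%s\n' "$wrapped" | wc -l)"
echo "single lines:  $(printf '%s\n' "$single" | wc -l)"

# Decoding the single-line form returns the original bytes.
printf '%s' "$single" | base64 -d | cmp -s - "$demo" && echo "round trip ok"
```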

# Enable the default etcd_ca, etcd_cert and etcd_key paths (generated by Calico itself once it starts).

sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico.yaml

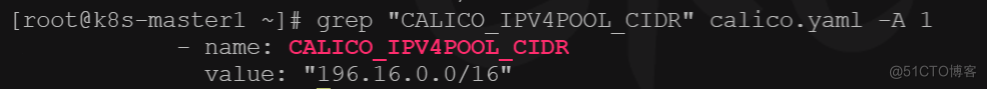

# Set your own Pod CIDR, here 196.16.0.0/16

POD_SUBNET="196.16.0.0/16"

sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# *value: "192.168.0.0/16"@  value: "'"${POD_SUBNET}"'"@g' calico.yaml

# Be sure to confirm that the Calico manifest was actually modified

grep "CALICO_IPV4POOL_CIDR" calico.yaml -A 1
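To see what the CIDR substitution does without touching the real manifest, it can be tried on a two-line sample first. This is a sketch under assumptions: the sample is assumed to mirror the commented-out block in the upstream manifest, and the `sed` expression is a variant that tolerates any number of spaces after `#`.

```shell
POD_SUBNET="196.16.0.0/16"

# Sample lines, assumed to match the commented-out block in calico-etcd.yaml.
sample=$(mktemp)
cat > "$sample" <<'EOF'
            # - name: CALICO_IPV4POOL_CIDR
            #   value: "192.168.0.0/16"
EOF

# Uncomment both lines and swap in the Pod CIDR; "# *value" absorbs the
# spaces after "#" so that "value" lines up under "name" afterwards.
sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# *value: "192.168.0.0/16"@  value: "'"${POD_SUBNET}"'"@g' "$sample"

cat "$sample"
```

If the `value:` line does not come out aligned with `name:`, the real manifest will not parse as intended, so it is worth checking the indentation as well as the address.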

kubectl apply -f calico.yaml

XI. Deploy CoreDNS

git clone https://github.com/coredns/deployment.git

cd deployment/kubernetes

# Replace 10.96.0.10 with the 10th IP of your Service network

./deploy.sh -s -i 10.96.0.10 | kubectl apply -f -
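For a Service network other than `10.96.0.0/16`, the "10th IP" can be computed rather than counted by hand. A minimal sketch using shell arithmetic; the function names are hypothetical.

```shell
# ip_to_int: convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# int_to_ip: convert an integer back to dotted-quad notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# nth_ip: print the address at offset $2 from the start of CIDR block $1.
nth_ip() {
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  base=$(ip_to_int "${1%/*}")
  net=$(( base / size * size ))     # align to the network boundary
  int_to_ip $(( net + $2 ))
}

SERVICE_CIDR="10.96.0.0/16"
nth_ip "$SERVICE_CIDR" 10    # prints 10.96.0.10
```

The same value has to be used for `clusterDNS` in kubelet-conf.yml and for the `-i` argument of `deploy.sh`.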

XII. Label the machines with roles

kubectl label node k8s-master2 node-role.kubernetes.io/master=''

kubectl label node k8s-master3 node-role.kubernetes.io/master=''

# Show the current taints on k8s-master1

kubectl describe node k8s-master1 | grep Taints

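XIII. Verify the cluster

- Verify Pod network reachability
  - Pods in the same namespace and in different namespaces can reach each other by IP
  - Pods deployed on different machines can also reach each other
- Verify Service network reachability
  - Cluster machines can reach a Service IP, with requests load-balanced
  - Pods can reach a Service by its domain name, serviceName.namespace
  - Pods can reach Services in other namespaces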

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-01
  namespace: default
  labels:
    app: nginx-01
spec:
  selector:
    matchLabels:
      app: nginx-01
  replicas: 1
  template:
    metadata:
      labels:
        app: nginx-01
    spec:
      containers:
      - name: nginx-01
        image: nginx
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc
  namespace: default
spec:
  selector:
    app: nginx-01
  type: ClusterIP
  ports:
  - name: nginx-svc
    port: 80
    targetPort: 80
    protocol: TCP
---
apiVersion: v1
kind: Namespace
metadata:
  name: hello
spec: {}
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-hello
  namespace: hello
  labels:
    app: nginx-hello
spec:
  selector:
    matchLabels:
      app: nginx-hello
  replicas: 1
  template:
    metadata:
      labels:
        app: nginx-hello
    spec:
      containers:
      - name: nginx-hello
        image: nginx
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc-hello
  namespace: hello
spec:
  selector:
    app: nginx-hello
  type: ClusterIP
  ports:
  - name: nginx-svc-hello
    port: 80
    targetPort: 80
    protocol: TCP
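Once the manifests above have been applied with `kubectl apply -f`, the checks listed at the start of this section can be exercised roughly as follows. This is a sketch rather than part of the original article: the `run` helper and the `DRY_RUN` switch are hypothetical, and it assumes `curl` is available inside the `nginx` image.

```shell
# By default only print the commands; set DRY_RUN=0 to run them against the cluster.
DRY_RUN=${DRY_RUN:-1}
run() {
  if [ "$DRY_RUN" = 1 ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Pod IPs and the nodes they landed on, for the Pod-to-Pod checks
run kubectl get pods -A -o wide

# Service IPs, to curl from any cluster machine
run kubectl get svc -A

# Same namespace: Pod in default -> nginx-svc.default
run kubectl -n default exec deploy/nginx-01 -- curl -sI http://nginx-svc.default

# Cross namespace: Pod in default -> nginx-svc-hello.hello, and the reverse
run kubectl -n default exec deploy/nginx-01 -- curl -sI http://nginx-svc-hello.hello
run kubectl -n hello exec deploy/nginx-hello -- curl -sI http://nginx-svc.default
```

Each `curl -sI` call should return the HTTP response headers from nginx if DNS resolution and Service routing are both working.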

kubectl label node k8s-node3 node-role.kubernetes.io/worker=''

kubectl label node k8s-master3 node-role.kubernetes.io/worker=''

kubectl label node k8s-node1 node-role.kubernetes.io/worker=''

kubectl label node k8s-node2 node-role.kubernetes.io/worker=''

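# Taint master1. In a cluster deployed from binaries, masters carry no taint by default and can be scheduled onto freely. It is best to taint one master so that a minimum of master capacity stays available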

kubectl label node k8s-master3 node-role.kubernetes.io/master=''

kubectl taint nodes k8s-master1 node-role.kubernetes.io/master=:NoSchedule

[This article is an entry in the Cloud Native essay contest.] Contest link: https://ost.51cto.com/posts/12598
