Spring Cloud Stream and Apache Kafka based Microservices on Oracle Cloud

source link: https://medium.com/oracledevs/spring-cloud-stream-and-kafka-based-microservices-on-oracle-cloud-9889732149a

This blog demonstrates how to run Spring Cloud Stream applications on top of Oracle Cloud

Communication between distributed applications in a microservices based architecture can be largely classified into two categories

- synchronous: RPC using HTTP (e.g. REST) or any other protocol (e.g. Avro, Thrift etc.)

- asynchronous: message based

The sample application in this blog consists of producer and consumer applications which communicate in a message driven (asynchronous) fashion

- Built using Spring Boot, Spring Cloud Stream

- They use Oracle Event Hub Cloud (managed Apache Kafka) as the intermediate broker (messaging middleware)

- They are deployed on Oracle Application Container Cloud: the producer app is a traditional internet facing web app and the consumer app is modeled as a worker service. Both apps leverage the Service Binding capability to connect to Oracle Event Hub Cloud

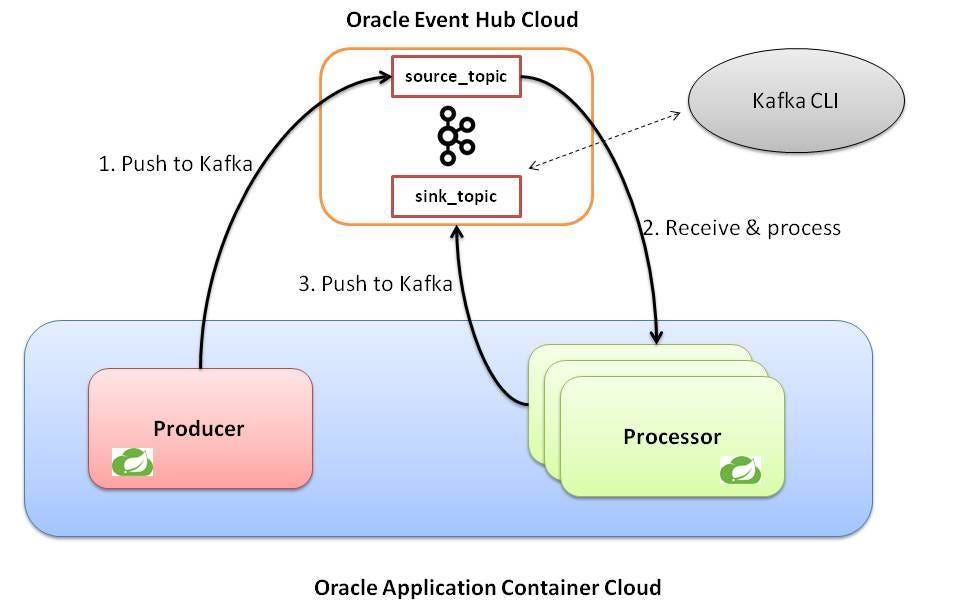

Application overview

The application itself is relatively simple. In order to set the context, here is a summary of what’s going on

- the producer application exposes a REST endpoint to receive messages

- once the client sends a message (using HTTP POST), the producer application pushes it to a Kafka topic (in Oracle Event Hub Cloud)

- the consumer (or the processor) application acts as a listener on the Kafka topic. Once it receives a message (asynchronously), it enriches it with information on which consumer node processed it and passes it on to another Kafka topic (you can think of it as the sink)
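The flow above can be pictured with a small in-memory model. The sketch below is plain Java with no Spring or Kafka involved: two queues stand in for the two Kafka topics, and the class, method and node names are hypothetical. It only illustrates the hand-off between producer, processor and sink; it is not the sample application's code.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

/** In-memory stand-in for the producer -> topic -> processor -> sink flow. */
final class PipelineSketch {

    // Hypothetical stand-ins for the two Kafka topics.
    static final BlockingQueue<String> sourceTopic = new LinkedBlockingQueue<>();
    static final BlockingQueue<String> sinkTopic = new LinkedBlockingQueue<>();

    /** Producer side: what happens to the body of an incoming HTTP POST. */
    static void publish(String payload) {
        sourceTopic.add(payload);
    }

    /** Processor side: take one message, tag it with the node name, forward it. */
    static void processOne(String nodeName) {
        String message = sourceTopic.poll(); // null if nothing is waiting
        if (message != null) {
            sinkTopic.add(message + " (processed by " + nodeName + ")");
        }
    }

    public static void main(String[] args) {
        publish("hello,world");
        processOne("processor-node-1");
        System.out.println(sinkTopic.poll());
        // prints: hello,world (processed by processor-node-1)
    }
}
```

In the real application the queues are Kafka topics, so the producer and the processor never call each other directly and can be scaled independently.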

Producer app details

- It’s a Spring Boot application which uses @EnableBinding and respective configuration to use Kafka as the binder implementation

- Uses @RestController and related annotations to set up the REST endpoint

Processor app

- Another Spring Boot app, which uses @StreamListener to receive data from the Kafka topic which the producer app populated and @SendTo for pushing data to another (sink) Kafka topic

- It enriches the message/data with the (ACCS) application node which processed it; this will help in understanding the load balancing concept

In both cases, the Kafka topics are automatically created behind the scenes by the application itself (this behavior can be altered)

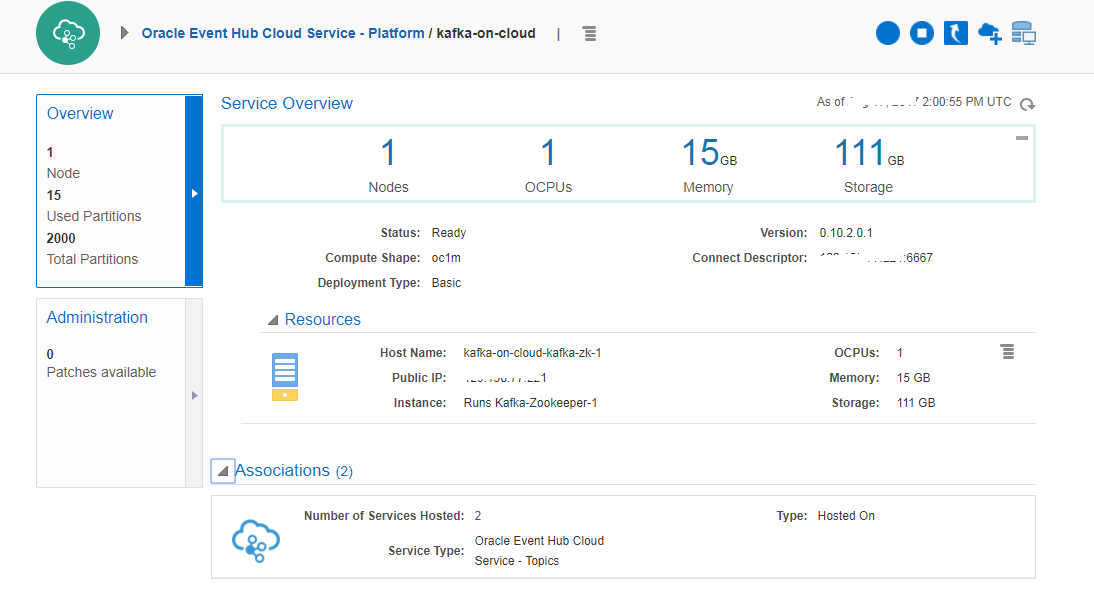

Setting up Oracle Event Hub (Kafka)

We won’t go through all the details since this is straightforward and well documented. All you need to do is create a Kafka cluster (in this case we have a single broker, co-located with Zookeeper); details are in the documentation

Creating custom access rule

You would need to create a custom Access Rule to open ports 2181 and 6667 on the Kafka Server VM on Oracle Event Hub Cloud. This allows our processing applications to communicate with Zookeeper (port 2181) and the Kafka CLI to reach the broker (port 6667)

The port for the Kafka cluster (6667 in this case) is required for the Kafka CLI to interact with Oracle Event Hub. Oracle Application Container Cloud does not need this port to be opened since the secure connectivity is taken care of by the service binding
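Once the access rule is in place, a quick TCP check can confirm that both ports are reachable from your machine. This is a minimal sketch using plain JDK sockets; the PortCheck class and the 3 second timeout are assumptions for illustration, and the host argument is whatever public address your Kafka Server VM has.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

final class PortCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Refused, timed out, or unknown host: treat all of them as "not reachable".
            return false;
        }
    }

    public static void main(String[] args) {
        // args[0]: address of the Kafka Server VM (environment specific)
        String host = args.length > 0 ? args[0] : "localhost";
        for (int port : new int[] {2181, 6667}) {
            System.out.println(host + ":" + port + " reachable: " + isReachable(host, port, 3000));
        }
    }
}
```

A successful connection only shows that the port is open; it does not prove that Zookeeper or Kafka is healthy behind it.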

Service Binding

Our message processing applications will bind to the Kafka cluster using the Service Binding feature in Oracle Application Container Cloud

Build & deployment

Build Producer application

- git clone https://github.com/abhirockzz/accs-spring-cloud-stream-kafka.git

- cd rest-producer

- mvn clean install (the build process will create accs-spring-cloud-stream-kafka-producer-dist.zip in the target directory)

Build Consumer application

- cd consumer

- mvn clean install (the build process will create accs-spring-cloud-stream-kafka-consumer-dist.zip in the target directory)

Push to cloud

With Oracle Application Container Cloud, you have multiple options in terms of deploying your applications. This blog will leverage PSM CLI which is a powerful command line interface for managing Oracle Cloud services

Other deployment options include the REST API, Oracle Developer Cloud and of course the console/UI

- Download and set up PSM CLI on your machine (using psm setup); see the PSM CLI documentation for details

- Modify deployment.json to fill in the Oracle Event Hub instance name as per your environment (declarative Service Binding capability in action). Here is an example

{
    "memory": "1G",
    "instances": 2,
    "services": [
        {
            "name": "kafka-on-cloud",
            "type": "OEHPCS"
        }
    ]
}

- Deploy the producer app: cd rest-producer, then run

psm accs push -n SpringCloudStreamProducer -r java -s hourly -m manifest.json -d deployment.json -p target/accs-spring-cloud-stream-kafka-producer-dist.zip

- Deploy the processor app: cd consumer, then run

psm accs push -n SpringCloudStreamProcessor -r java -s hourly -m manifest.json -d deployment.json -p target/accs-spring-cloud-stream-kafka-consumer-dist.zip

Once executed, an asynchronous process is kicked off and the CLI returns its Job ID for you to track the application creation

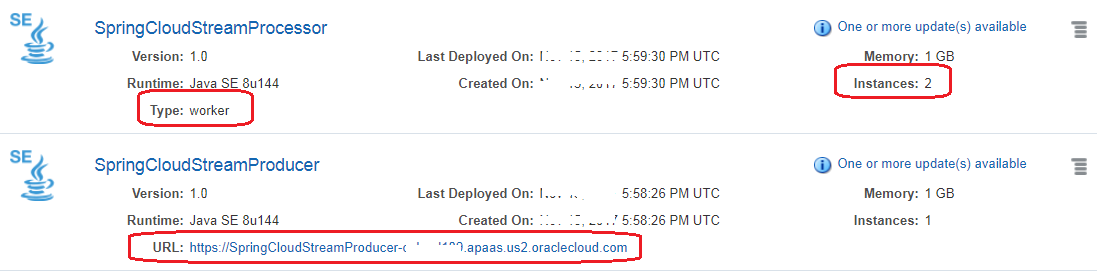

Check your application

Once the deployment is successful, you should see the applications on the console

Note that

- the ‘producer’ app is an internet facing (web) app

- ‘processor’ app is not publicly accessible — it’s a worker application (not exposed to public internet)

- there are two instances of the ‘processor’ (consumer) app to demonstrate load balanced message consumption
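As a rough mental model of why two instances share the work: consumers in the same Kafka consumer group divide a topic's partitions among themselves, so each message is handled by only one member of the group. The sketch below is a simplified round-robin model in plain Java, not Kafka's actual assignment logic; the instance names and the partition count are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class ConsumerGroupSketch {

    /** Spreads partitions 0..partitionCount-1 over the group's members, round-robin. */
    static Map<String, List<Integer>> assign(List<String> members, int partitionCount) {
        Map<String, List<Integer>> assignment = new TreeMap<>();
        for (String member : members) {
            assignment.put(member, new ArrayList<>());
        }
        for (int partition = 0; partition < partitionCount; partition++) {
            String owner = members.get(partition % members.size());
            assignment.get(owner).add(partition);
        }
        return assignment;
    }

    public static void main(String[] args) {
        // Hypothetical instance names and partition count.
        System.out.println(assign(List.of("processor-1", "processor-2"), 4));
        // prints: {processor-1=[0, 2], processor-2=[1, 3]}
    }
}
```

One consequence of this model is that a topic with a single partition leaves the second instance idle, so the partition count matters when scaling out consumers.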

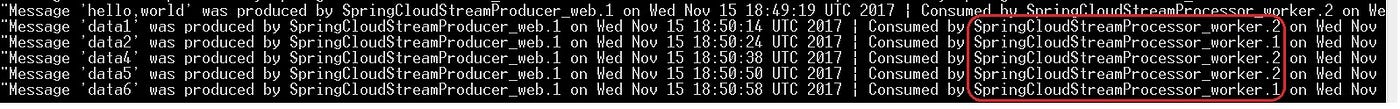

Test drive

Testing this is pretty simple as well

- use the Kafka CLI to register as a consumer for sink_topic

kafka-console-consumer.sh --bootstrap-server <kafka_broker>:6667 --topic sink_topic

- POST a few messages using the producer app

curl -X POST -H "content-type: text/plain" https://springcloudstreamproducer-<your_domain>.apaas.us2.oraclecloud.com -d hello,world

- the CLI Kafka consumer should get a message similar to the following

Note how the consumption process is load balanced (consumer node/instance name highlighted in red), thanks to the spring.cloud.stream.bindings.input.group configuration
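To observe the load balancing it helps to send a batch of messages rather than a single one. The following is a minimal sketch that repeats the POST using the JDK's built-in HttpClient (Java 11+) as an alternative to running curl several times; the PostMessages class name, the message count of 5 and the message suffix are assumptions, and the URL must be the one of your own producer app.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

final class PostMessages {

    /** Builds a POST request with a text/plain body for the producer endpoint. */
    static HttpRequest buildRequest(String producerUrl, String message) {
        return HttpRequest.newBuilder(URI.create(producerUrl))
                .header("Content-Type", "text/plain")
                .POST(HttpRequest.BodyPublishers.ofString(message))
                .build();
    }

    public static void main(String[] args) throws Exception {
        // args[0]: public URL of the producer app (environment specific)
        String producerUrl = args[0];
        HttpClient client = HttpClient.newHttpClient();
        for (int i = 1; i <= 5; i++) {
            HttpResponse<String> response = client.send(
                    buildRequest(producerUrl, "hello,world " + i),
                    HttpResponse.BodyHandlers.ofString());
            System.out.println("message " + i + " -> HTTP " + response.statusCode());
        }
    }
}
```

With the console consumer still attached to sink_topic, the enriched messages should show which processor instance handled each one.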

Now, our entire cycle from producer -> Kafka -> processor -> Kafka is complete. That’s it for this blog post!

Cheers!

The views expressed in this post are my own and do not necessarily reflect the views of Oracle.
